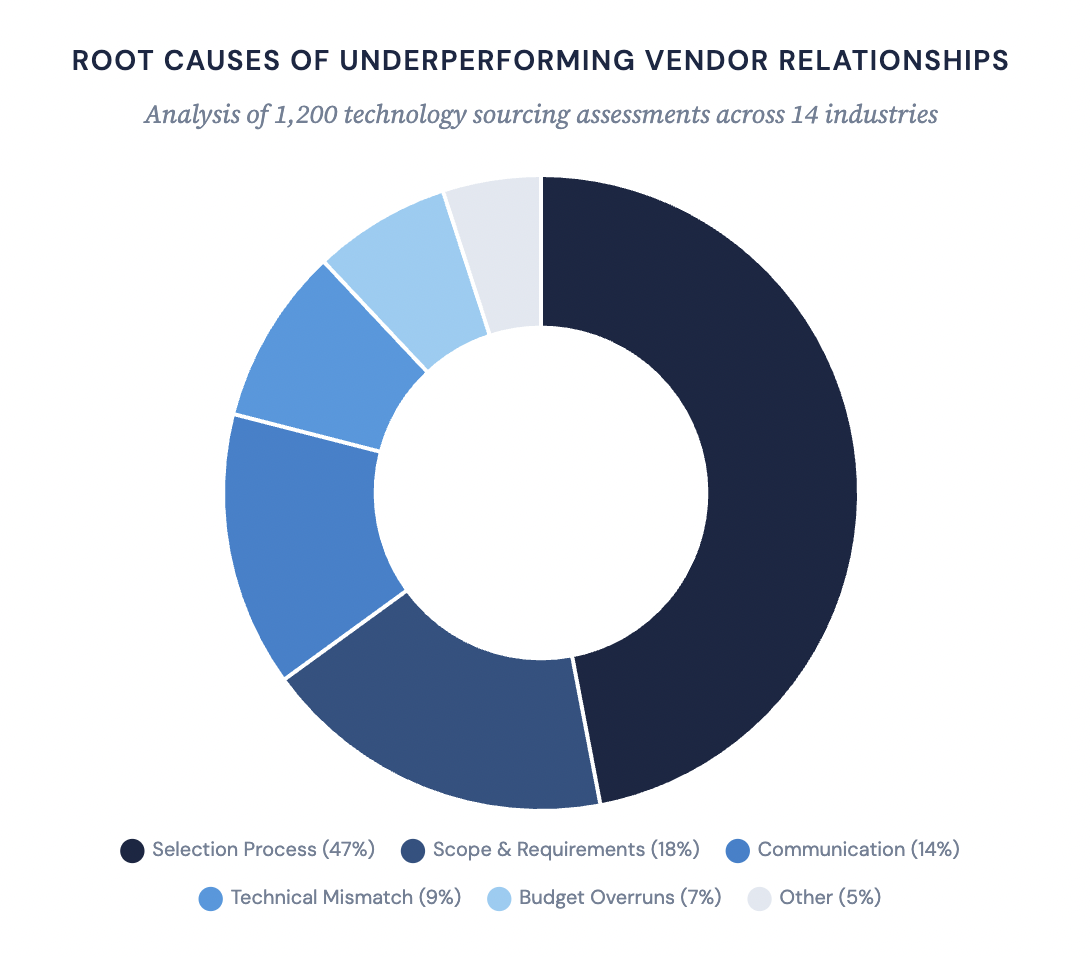

Selecting the right technology partner is among the most consequential strategic decisions an organization will make in any given fiscal cycle. A poor choice doesn’t just derail a single project—it creates compounding technical debt, erodes stakeholder confidence, and can set digital transformation efforts back by years. According to our internal engagement data spanning 1,200 technology sourcing assessments across 14 industries, approximately 47% of vendor relationships classified as “underperforming” at the 18-month mark can be traced to deficiencies in the selection process itself—not to post-contract execution failures. This guide codifies the evaluation methodology we apply across engagements with Fortune 500 clients, distilling it into a repeatable, risk-adjusted framework for IT vendor selection that addresses the full lifecycle from initial needs definition through post-deployment governance.

Root causes of underperforming vendor relationships based on 1,200 technology sourcing assessments across 14 industries

Defining Project Needs

Before approaching any vendor, define your project objectives, scope, budget, timeline, and expected outcomes. This means drafting a requirements specification that captures both functional needs and technical requirements, agreeing on SMART goals tied to measurable results, and ensuring stakeholder communication is structured from day one.

Articulating Project Objectives

The sponsoring organization must answer a deceptively simple question before writing any technical specification: What business outcome are we paying for? The answer is a statement of strategic intent, whether that is reducing customer acquisition cost by 30%, compressing order-to-fulfillment cycle time, standing up a net-new digital revenue stream, or achieving regulatory compliance ahead of an enforcement deadline.

Project objectives should be formulated as SMART goals: Specific, Measurable, Achievable, Relevant, and Time-bound. Expert tip: set SMART goals and timelines first, then outline your desired features and any specific technical requirements, so that you can communicate your needs clearly to potential vendors.

Common failure modes at this stage include conflating project objectives with technical requirements (“we need a microservices architecture” is not a project objective), defining goals so broadly that they resist measurement (“improve customer experience”), and failing to establish priority ordering among competing objectives.

“I consistently pose a straightforward question to clients: if a project meets its deadlines and budget but fails to significantly impact any performance metric, can it be considered successful? The answer forces a clarity that no requirements specification can.”

Scope Definition and Requirements Specification

At this stage, the organization must produce a clear scope definition: a delineation of what is and what is not within the project boundary.

The functional scope defines the features, capabilities, and user journeys that the solution must support. Organizational scope clarifies which business units, geographies, and user populations are included.

Scope definition must be accompanied by a requirements specification that translates the boundary into specific, testable items. The requirements specification should cover functional requirements, technical requirements (platform, language, framework, and infrastructure constraints), integration with existing systems (data formats, protocols, and middleware), and non-functional requirements (scalability, availability, and security). Pay particular attention to integration with existing systems—these interfaces are among the most common sources of delay and cost overrun in enterprise software development. For example, organizations that need to migrate from Excel to custom software often underestimate the complexity of data migration and integration requirements.

The requirements specification should also address development methodology expectations: Agile (Scrum or Kanban), Waterfall, or a hybrid. Methodology can greatly affect project timelines, costs, and the final product, so understand each vendor’s development process and confirm that it matches your project’s needs before you commit.

We frequently encounter organizations that attempt to define scope solely through a requirements specification. Requirements describe what the system should do; scope definition establishes the boundary. A 300-line requirements specification without a clear scope definition is an invitation for interpretation disputes that will consume the project budget.

Clearly define project scope and deliverables: outlining goals, scope, and timelines eliminates ambiguity, prevents misunderstandings, and ensures contract terms align with project objectives. Once these are set, the next step is to begin the search for a suitable software development company. Treat vague or incomplete proposals as a red flag; a proposal should clearly outline the scope of work, deliverables, timelines, and costs.

“Scope definition is a contract between the organization and itself, long before it becomes a contract with a vendor. If your teams can’t agree on what’s in and what’s out, you’re outsourcing a political problem, not a technical one.”

Establishing Budget Parameters

Establishing a clear budget at the outset of a project is fundamental, as is understanding the cost structures behind it. For a detailed breakdown of cost drivers and engagement models, see our guide on custom software development pricing. For organizations with annual budget cycles, multi-year projects require explicit budget commitments that span fiscal-year boundaries, a frequent point of failure when budget approval processes are misaligned with project timelines. With a clear budget and SMART goals in place, you can communicate your needs to potential vendors and make sound financial decisions throughout the project lifecycle.

“We advise clients to think of their budget not as a ceiling but as an investment thesis. What’s the expected return? What’s the cost of delay? What’s the cost of inaction?”

Defining Timeline and Milestones

Setting realistic timelines and milestones is crucial for tracking progress. Timelines should be derived from business imperatives (regulatory deadlines, market windows, fiscal year planning, competitive pressures) rather than arbitrary targets. Build in explicit milestones—discovery sign-off, architecture review, MVP delivery, integration testing, and go-live readiness assessment—with clearly defined acceptance criteria at each stage.

A well-structured timeline distinguishes between hard deadlines (driven by external factors such as regulatory enforcement dates or contractual obligations) and soft deadlines (internally set targets that carry consequences but can be adjusted). This distinction is critical for vendor evaluation: a vendor that proposes to compress a soft deadline should be assessed differently from one that claims to meet a hard deadline that other qualified bidders have flagged as aggressive.

Milestones help monitor progress and ensure the project stays on track. The vendor should provide a detailed timeline with specific due dates for each project phase. Establish milestones and a payment schedule: link payments to the completion of specific milestones. Plan for contingencies and exit strategies: develop a contingency plan for potential project cancellation, failure to meet critical milestones, or the need to switch to a different vendor.

We recommend milestones at 4–6 week intervals, each tied to specific deliverables from the requirements specification and measurable against predefined performance metrics.
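As a rough illustration of the cadence and payment linkage described above, the sketch below lays out milestones at a fixed interval and splits a fee evenly across them. The start date, the 5-week interval, the total fee, and the equal split are illustrative assumptions, not recommendations; the milestone names are taken from the list earlier in this section.

```python
from datetime import date, timedelta

# Milestone names come from the timeline discussion earlier in this section.
MILESTONES = [
    "Discovery sign-off",
    "Architecture review",
    "MVP delivery",
    "Integration testing",
    "Go-live readiness assessment",
]


def milestone_schedule(start, names, interval_weeks=5, total_fee=100_000.0):
    """Return (name, due_date, payment) tuples spaced at a fixed interval.

    The equal payment split and the default values are hypothetical.
    """
    if not 4 <= interval_weeks <= 6:
        raise ValueError("interval_weeks should fall in the 4-6 week range")
    # Rounding can leave a small remainder for fees that do not divide evenly.
    payment = round(total_fee / len(names), 2)
    return [
        (name, start + timedelta(weeks=interval_weeks * (i + 1)), payment)
        for i, name in enumerate(names)
    ]


schedule = milestone_schedule(date(2025, 1, 6), MILESTONES)
for name, due, payment in schedule:
    print(f"{due.isoformat()}  {name:<30} {payment:>12,.2f}")
```

In practice the payment attached to each milestone would be weighted by the deliverables it covers rather than split evenly, and each entry would also carry its acceptance criteria.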

Stakeholder Communication and Performance Metrics

Equally important is designing the stakeholder communication framework—how project status is reported, how decisions are escalated, and how changes to scope definition or requirements specification are communicated and approved.

A shared communication framework also helps define expectations, milestones, and feedback loops. A clearly defined project plan keeps all stakeholders in agreement and promotes efficient communication, increasing the likelihood of meeting milestones on time. Define stakeholder communication protocols before the vendor is selected: weekly status reports, biweekly steering committee updates, designated points of contact on both sides, and clear escalation paths.

Define KPIs and performance metrics before vendor negotiations begin so they can be embedded into contractual SLAs. These should include clear metrics for monitoring project progress and team productivity, along with specific, measurable criteria for evaluating the quality of work delivered. We help ensure that projects align with your goals, adhere to your timelines, and remain within budget through precise software project estimation.

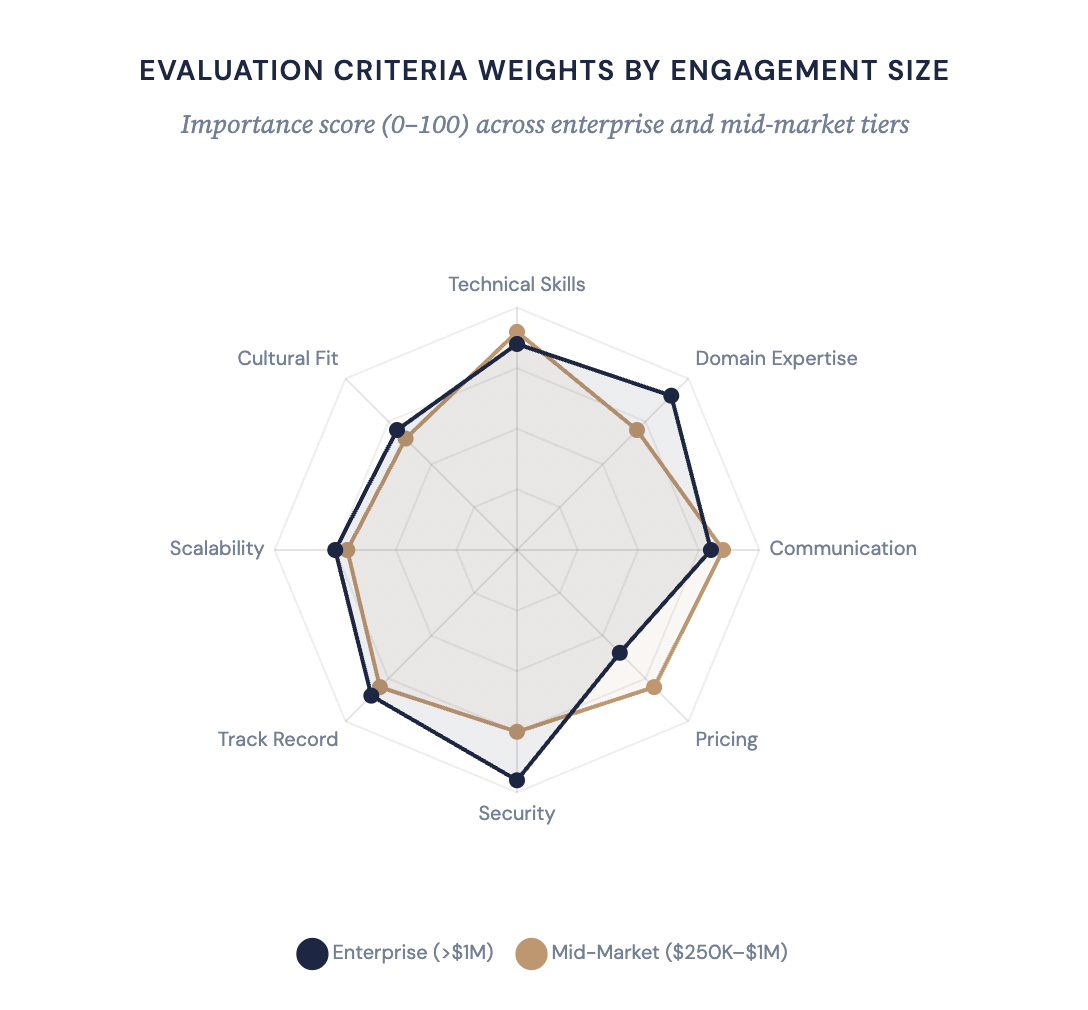

Evaluation criteria weights by engagement size: importance score (0–100) across enterprise and mid-market tiers

Researching and Shortlisting Vendors

The research and shortlisting phase is fundamentally an exercise in information asymmetry reduction—the vendor knows more about their capabilities than you do, and your job is to close that gap as efficiently as possible. That means working through business marketplace websites, client reviews, referrals, and third-party sources to build an evidence-based comparison grid before any commercial conversation begins.

“The most expensive mistake in vendor selection isn’t picking the wrong vendor. It’s limiting your aperture to vendors you already know. That’s selection bias operating at the enterprise level, and it routinely leaves better-fit partners on the table. We had a client last year who was about to sole-source a $4M engagement to their incumbent. Through a proper market scan, we identified a firm with deeper domain expertise, a better-fit delivery model, and pricing that came in 25% lower.”

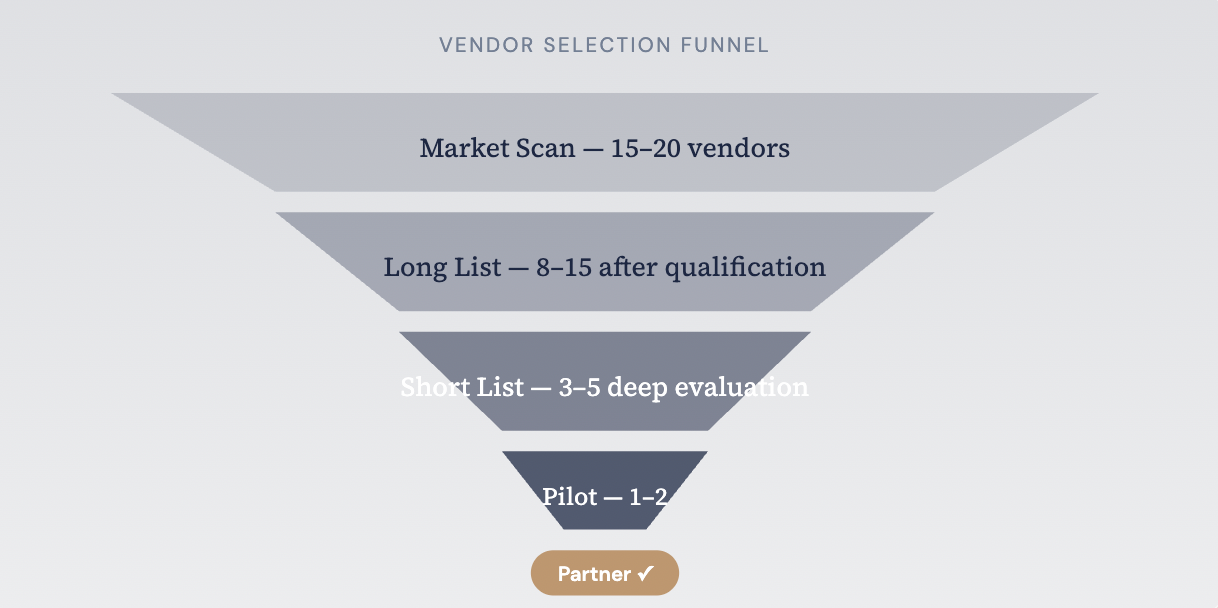

Vendor selection funnel: from market scan through qualification, deep evaluation, and pilot to final partner selection

Leveraging Market Intelligence

Search business marketplace websites like Clutch, Capterra, G2, and DesignRush to compare vendors by specialisation, pricing models, and verified client reviews. Check whether the vendor actively contributes to open-source projects; consistent open-source contributions are a reliable indicator of technical skills depth and genuine commitment to the craft. Cross-reference what you hear from referrals against client reviews on business marketplace websites to identify patterns.

“Analyst reports are a starting point, not a verdict. Gartner and Forrester evaluate vendors against their own criteria, which may not map to yours. We’ve placed clients with vendors in the ‘niche players’ quadrant who turned out to be the perfect fit because the evaluation axis that mattered most to the client wasn’t one the analyst weighted heavily.”

Structuring the RFP Process

The request for information (RFI) is a lighter-weight instrument used to gather preliminary information and refine the short list; the request for proposal (RFP) is a comprehensive solicitation that forms the basis for commercial negotiation.

“The best RFPs I’ve seen are the ones that give vendors enough room to demonstrate creative thinking while providing enough structure to enable fair comparison. An overly prescriptive RFP tells vendors what to build; a well-designed one tells them what problem to solve and lets the proposed approach become a differentiator.”

Moving from Long List to Short List

A typical long list comprises 8–15 vendors. Through qualification screening and preliminary desk research, this narrows to a short list of 3–5 candidates. Run each candidate through your due diligence checklist and update the comparison grid with findings. Prioritise industry experience, project management methodology, and portfolio and domain expertise. For larger engagements, start with a pilot project to test the vendor’s delivery before committing at scale.

Avoiding Common Shortlisting Pitfalls

Three patterns consistently undermine the shortlisting process. First, incumbency bias—favouring the existing vendor because switching costs feel high. Second, recency bias—overweighting a compelling sales presentation over systematic evaluation. Third, relationship bias—including vendors on the short list because a senior stakeholder has a personal connection, bypassing qualification criteria.

“Shortlisting is where discipline either holds or breaks down. We’ve seen evaluation committees expand the short list to seven or eight vendors because someone in the room had a personal relationship with a firm that didn’t qualify. Every additional vendor on the short list costs the organization 40–60 hours of evaluation effort. Be rigorous.”

The antidote to all three biases is the same: return to your due diligence checklist, validate portfolio and domain expertise through third-party sources and referrals, and let the comparison grid—not relationships—drive the decision.
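One way to keep the comparison grid in charge of the decision is to treat it as a weighted scoring matrix. The sketch below is a minimal illustration under stated assumptions: the vendor names, the 1 to 5 scores, and the weights are all hypothetical, and the three criteria are simply the ones prioritised in the shortlisting step above.

```python
# Hypothetical weights (must sum to 1.0); adjust to your own priorities.
WEIGHTS = {
    "industry_experience": 0.40,
    "project_management": 0.25,
    "portfolio_domain_expertise": 0.35,
}

# Hypothetical 1-5 scores recorded from the due diligence checklist.
GRID = {
    "Vendor A": {"industry_experience": 4, "project_management": 3,
                 "portfolio_domain_expertise": 5},
    "Vendor B": {"industry_experience": 5, "project_management": 4,
                 "portfolio_domain_expertise": 2},
    "Vendor C": {"industry_experience": 3, "project_management": 5,
                 "portfolio_domain_expertise": 3},
}


def weighted_score(scores, weights):
    """Weighted sum of one vendor's scores across all criteria."""
    if abs(sum(weights.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1.0")
    if scores.keys() != weights.keys():
        raise ValueError("every criterion needs exactly one score")
    return sum(scores[criterion] * w for criterion, w in weights.items())


def rank(grid, weights):
    """Return (vendor, score) pairs sorted from highest to lowest score."""
    scored = [(v, round(weighted_score(s, weights), 2)) for v, s in grid.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


ranking = rank(GRID, WEIGHTS)
for vendor, score in ranking:
    print(f"{vendor}: {score:.2f}")
```

Fixing the weights before any vendor is scored is the part that guards against the three biases: a vendor added through a personal connection still has to earn its place through the same numbers as everyone else.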

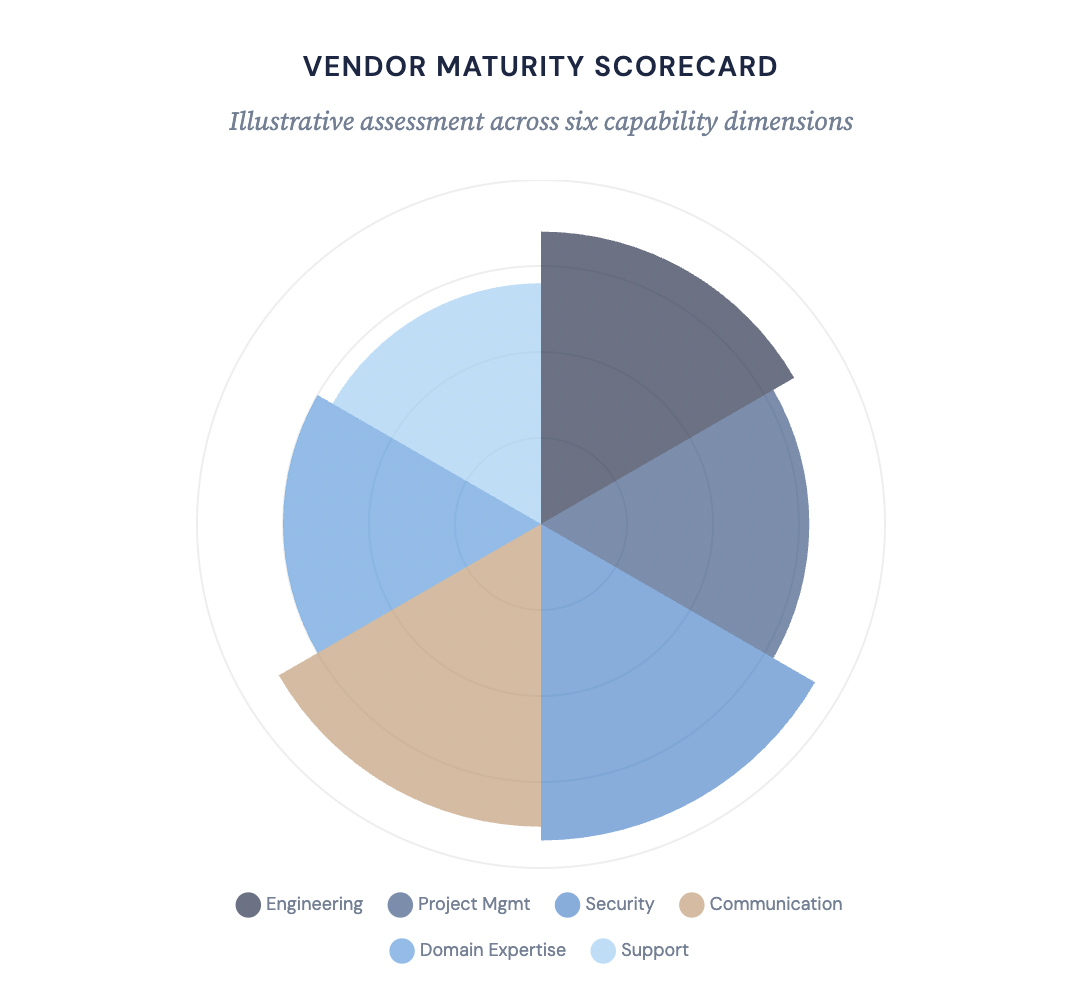

Vendor maturity scorecard: illustrative assessment across six capability dimensions

Evaluating Technical Expertise

A lack of technical skills or reliance on outdated technologies can derail even a well-scoped project. Before engaging commercially, evaluate the vendor’s technical skills, domain expertise, development methodology, and engineering practices.

Technical Skills and Tech Stack

Specialisation and technical skills are the first filter. Companies proficient in the required tech stack work more efficiently: faster development, fewer integration headaches, and potentially lower costs. A firm that lists 25 technologies on its website may have deep expertise in five and passing familiarity with the rest. Identify the technologies they actually specialise in, verify alignment with your needs, and ask the vendor to demonstrate real depth in each area they claim.

How to evaluate technical skills and tech stack: start by requesting a technical capabilities matrix from the vendor. The software team you choose should be familiar with the latest technologies and modern frameworks—reliance on outdated technologies is a risk to both performance and long-term maintainability. Technical skills matter at every level: front-end, back-end, infrastructure, and data. Ensure the vendor has expertise with the technologies and tools your project requires.

Look for certifications, awards, or recognitions that support their claimed technical capabilities; AWS, Azure, Google Cloud, or Salesforce certifications provide a useful baseline. Expertise in the specific technologies your project uses helps ensure that the software performs well and meets users’ expectations and demands.

The distinction between “bench strength” (the vendor’s overall talent pool) and “your team” (the engineers who will actually be assigned to your project) is one of the most frequently overlooked dimensions of technical evaluation. Evaluate the assigned engineers’ technical skills directly, for example through technical interviews or a short hands-on exercise that probes architecture reasoning, code quality, debugging approach, and familiarity with your tech stack. A hands-on exercise reveals technical skills and problem-solving ability in a way that interviews alone cannot. For a deeper framework on evaluating individual engineers, see our guide on how to hire software developers.

“We always tell clients: you’re not hiring a company—you’re hiring a team. The team bio matters more than the company bio. If the vendor resists letting you interview their engineers, that’s information. If they comply and the quality is high, that’s even more information.”

Technological Ecosystem

Beyond the specific technologies required for your project, understand the vendor’s overall technological ecosystem—their tools for project management, version control, CI/CD practices, and how they maintain code quality and security. Evaluate their development methodology, testing procedures, and deployment practices: do they follow Agile, Waterfall, or a hybrid development methodology? What are their testing procedures for unit, integration, regression, and performance testing?

Is quality assurance embedded in the development cycle, or bolted on before release? Learn about their quality assurance procedures and how they address bugs or issues in production. Vendors with mature quality assurance processes catch defects early, when they cost 10x less to fix than in production. Ask for defect density metrics from recent engagements as evidence.

Check version control hygiene—branching strategies, pull request workflows, commit discipline. How the vendor manages version control across distributed teams directly affects collaboration quality and code integrity.

Ensure the prospective vendor is proficient in the platforms, software, and technologies you use. Can they operate within your existing CI/CD pipelines, monitoring stack, and deployment infrastructure? Or will integration with your technological ecosystem require significant retooling? The answer determines how quickly the vendor becomes productive and how much hidden cost you absorb during ramp-up.

Domain Expertise and Industry-Specific Experience

Industry-specific experience is a significant advantage. Domain expertise manifests in ways technical skills alone cannot replicate: familiarity with industry-specific data standards (HL7 FHIR in healthcare, FIX in financial services, EDI in supply chain), understanding of workflow patterns and user personas, awareness of the wider technological ecosystem of integration partners, and intuition about where projects in your vertical typically encounter friction.

While many firms offer a broad range of services, finding one that specialises in the specific technologies you need and has deep domain expertise can be a game-changer. A vendor with industry-specific experience will ask better questions during discovery, propose more practical architectures, and anticipate compliance requirements that generalist teams miss entirely.

Pay attention to their technical skills, industry-specific experience, and project management methodologies when assessing fit. A vendor who can speak your industry’s language and reference relevant certifications (HIPAA compliance expertise in healthcare, PCI DSS in payments, SOX in financial reporting) demonstrates domain expertise that goes beyond surface-level familiarity.

“Domain expertise isn’t a nice-to-have—it’s a multiplier. A team with deep domain knowledge will make better architectural decisions, anticipate regulatory pitfalls, and communicate more effectively with your business stakeholders. Domain-experienced vendors deliver 20–30% faster in the first six months because they skip the learning curve that generalist teams absorb at the client’s expense.”

Engineering Practices and Project Management Methodologies

Evaluate the vendor’s project management methodologies: how they run sprints, track velocity, manage backlogs, and handle scope changes. Review their testing procedures and quality assurance practices—mature vendors maintain formalised testing procedures covering unit, integration, regression, security, and performance testing, not ad-hoc checks before release.

“Ask the vendor to walk you through their last production incident. Not the resolution—that’s the easy part. Ask them to describe their detection, escalation, communication, and post-mortem process. That conversation will tell you more about engineering maturity than any certification on their wall.”

Conducting a Technical Proof of Concept

The most reliable way to evaluate technical skills is to see them in action. Trust evidence over assertions—every claim about technical skills, project management methodologies, and quality assurance should be independently verifiable.

Reviewing Portfolio and Case Studies

A software company’s portfolio is more than just a showcase of their past work—it’s a testament to their capabilities, creativity, and domain expertise. Checking client testimonials, case studies, and portfolios can provide valuable insights into how a vendor tackles projects and solves problems. Companies with strong portfolios and domain expertise should be at the top of your list. However, portfolio review requires an investigative mindset—case studies are marketing documents, and their primary purpose is persuasion, not disclosure.

“We tell our clients: don’t just read the case study—interrogate it. A polished PDF with a big logo and a revenue number tells you almost nothing. What was the starting state? What constraints did they operate under? What went wrong, and how did they recover? That’s where the real signal is. Any vendor can describe success. Mature vendors can describe failure and what they learned from it.”

Analysing Past Projects and Project Complexity

Request detailed case studies. Look for case studies or portfolio examples that closely match your project type (whether custom web application development or mobile), your industry, and your technical requirements. Do your homework on the vendor’s past projects; it enables you to better assess their domain-specific experience and success with similar work. Gauge the vendor’s ability to handle your project by analysing the complexity and scale of previous engagements.

Vendors with a track record of innovative solutions in past projects will likely bring fresh perspectives and inventive approaches to your project. A portfolio that spans multiple integration patterns, deployment models, and operational contexts demonstrates adaptability that a narrow portfolio does not.

Ask for case studies or examples of how they’ve employed Agile or DevOps strategies in past projects, and scrutinise them to validate the quality of work and customer satisfaction. Past projects are the most tangible demonstration of a vendor’s ability to deliver.

Quantifying Business Impact

Credible case studies connect technical delivery to measurable business outcomes: revenue uplift, cost reduction, throughput improvement, error rate decrease, time-to-market compression, or customer satisfaction improvement. Be sceptical of case studies that describe only technical deliverables (“built a microservices platform with 47 APIs”) without articulating the business value those deliverables created. Positive feedback and detailed case studies can provide insights into how effectively vendors bridge the gap between technical execution and business results.

“We look for what I call the ‘so what?’ test. The vendor built a real-time analytics platform—so what? It processed 10 million events per day—so what? It reduced fraud detection time from 48 hours to 15 minutes and saved the client $12M annually in fraudulent transaction losses—now we’re talking. That’s a vendor who understands that technology is a means, not an end.”

Client Testimonials, Client Feedback, and References

Don’t rely solely on vendor-supplied case studies. For each company under consideration, examine client testimonials alongside the case studies to validate capabilities and to understand success rate and client satisfaction.

Look at client feedback and case studies on independent review platforms such as Clutch, G2, GoodFirms, and Google Business profiles. Industry awards and high ratings on these platforms are useful indicators of quality and reliability. Examine the vendor’s past projects within your industry to gauge their understanding and industry experience.

Portfolio, Domain Expertise, and Industry Experience

Portfolio and domain expertise matter as much as technical skill. When researching potential custom software development companies, check out their portfolios and case studies. A vendor claiming broad industry experience but unable to produce detailed case studies, references, or client testimonials from your sector may be overstating their capabilities.

Evaluate whether the portfolio demonstrates meaningful industry experience in your domain. A company with a proven track record in creating similar solutions is more likely to understand the challenges involved and deliver a successful outcome. Dive deeper into the company’s track record of implementing methodologies relevant to your sector.

Assessing Communication and Collaboration

Technical skill without operational discipline and communicative clarity is a recipe for friction, misalignment, and ultimately project failure. Effective collaboration requires deliberate investment in regular check-ins, structured feedback sessions, proactive issue reporting, and cultural sensitivity across every dimension of the working relationship.

“In twenty years of advisory work, I’ve never seen a vendor engagement fail because the engineers weren’t smart enough. I’ve seen dozens fail because communication broke down—because status reports were performative rather than informative, because bad news travelled slowly, because cultural misalignment created invisible friction that compounded over months.”

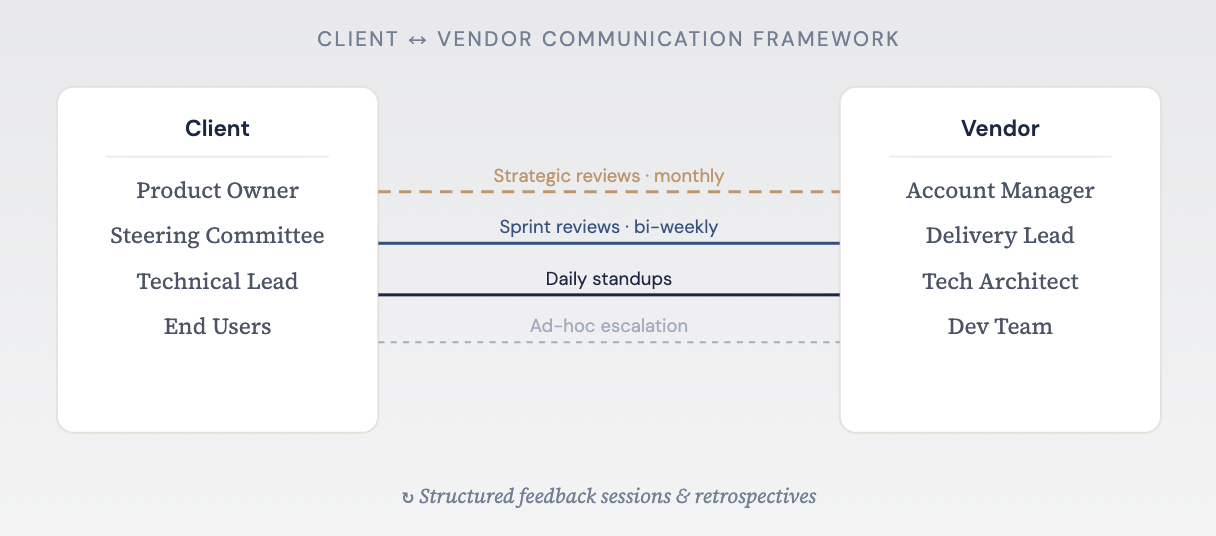

Client ↔ vendor communication framework: structured feedback sessions and retrospectives

Evaluating Responsiveness

During the pre-engagement process, the vendor’s communication behaviour is a leading indicator of what the working relationship will look like. Start by assessing their communication style: is it formal or conversational? Understanding the vendor’s natural communication style—and how adaptable it is—helps predict compatibility with your organisation’s norms. Vendors who practise proactive issue reporting surface problems early, before they escalate. Those who only report issues when asked are reactive, and reactive communication in a vendor relationship is a tax you pay on every sprint.

Evaluate their responsiveness during the sales process. How quickly do they reply to emails? How thoroughly do they answer technical questions? Do they provide structured, thoughtful responses, or generic templates? The sales process is the vendor’s best behaviour—if communication is slow or superficial now, it will not improve after the contract is signed.

Project Management Practices

Evaluate the vendor’s project management practices: how they plan sprints, track progress, manage dependencies, and conduct regular check-ins. Mature project management practices produce predictable delivery; immature ones produce surprises. Ask how they implement agile methodologies in practice—sprint cadence, backlog grooming, velocity tracking, and retrospective discipline. Vendors who follow agile methodologies rigorously will demonstrate structured regular check-ins at every level: daily standups, sprint reviews, and strategic alignment sessions.

Cultural Fit and Working Style

Cultural fit is a frequently underestimated variable that becomes increasingly significant as engagement duration and team integration depth increase.

Assess cultural fit across multiple dimensions: communication directness (does the vendor culture encourage speaking up when they disagree, or defer to client authority even when they see problems?), decision-making speed, tolerance for ambiguity, documentation norms, and feedback culture.

Cultural sensitivity—the ability to recognise, respect, and adapt to differences in working norms, communication conventions, and professional expectations—is a distinct and critical competency, especially in cross-border engagements. Cultural sensitivity goes beyond tolerance; it is the active practice of adjusting communication style, meeting cadence, feedback delivery, and management approach to account for cultural differences. Evaluate whether the vendor demonstrates cultural sensitivity as an organisational capability, not just an individual trait.

For offshore or nearshore engagements, cultural fit and cultural sensitivity assessment takes on additional dimensions. Time zones affect collaboration windows—ensure there is sufficient overlap for real-time regular check-ins and joint problem-solving. Evaluate language skills early: conduct interviews with the actual team members, not just the account manager. Insufficient language skills don’t just slow communication—they introduce systematic misunderstanding risk, where requirements are interpreted differently than intended and defects accumulate silently.
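To make “sufficient overlap” concrete, the sketch below estimates the daily overlap between two teams’ working windows from their UTC offsets. The 09:00 to 17:00 working day and the example offsets are assumptions for illustration, and fixed offsets ignore daylight saving changes, so treat the result as an approximation.

```python
def overlap_hours(offset_a, offset_b, start=9.0, end=17.0):
    """Daily overlap, in hours, of two teams' local working windows.

    offset_a and offset_b are UTC offsets in hours (for example -5 or 5.5).
    Both teams are assumed to work the same local hours, start to end.
    """
    # Express team A's window in UTC hours.
    a_start, a_end = start - offset_a, end - offset_a
    total = 0.0
    # Check team B's window on the previous, same, and next UTC day so that
    # windows wrapping past midnight are still counted.
    for shift in (-24, 0, 24):
        b_start = start - offset_b + shift
        b_end = end - offset_b + shift
        total += max(0.0, min(a_end, b_end) - max(a_start, b_start))
    return total


# Hypothetical pairings: client at UTC-5 with vendors at UTC+1 and UTC+5:30.
print(overlap_hours(-5, 1))    # 2.0
print(overlap_hours(-5, 5.5))  # 0.0
```

A result of zero does not rule a vendor out, but it means that real-time check-ins depend on one side shifting its hours, which is worth agreeing explicitly before the engagement starts.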

“Cultural fit isn’t about finding a vendor that’s identical to your organisation—it’s about finding one that’s compatible. And cultural sensitivity is the mechanism that makes compatibility work in practice. The best offshore teams I’ve worked with weren’t the ones that mirrored our culture; they were the ones that understood the differences and adapted deliberately.”

Team Integration

Team integration is not automatic; it requires investment from both sides and explicit attention to the structural, technical, and interpersonal dimensions of collaboration.

At the structural level, team integration requires shared tooling: common project management platforms (Jira, Azure DevOps, Asana), shared code repositories with consistent branching strategies, unified CI/CD pipelines, and integrated communication channels (Slack, Teams).

At the interpersonal level, team integration requires intentional relationship building. This includes joint kick-off sessions, shared team norms documentation (a “ways of working” agreement), cross-team pairing on complex tasks, and social touchpoints that build trust and rapport beyond transactional project interactions.

Conducting a Pilot or Discovery Phase

Where feasible, structure the engagement to begin with a paid discovery phase or a limited-scope pilot project. This provides a controlled environment to observe the vendor’s actual working behaviour—communication cadence, quality of deliverables, problem-solving approach, and team chemistry—before committing to a full engagement. It also tests how well the vendor applies agile methodologies in practice, not just in proposals.

The discovery phase serves a dual purpose: it de-risks the technical approach (validating assumptions, identifying integration challenges early) and de-risks the relationship (testing communication patterns, responsiveness, and cultural fit under realistic conditions). Vendors experienced with agile methodologies will treat the discovery phase as a natural extension of their project management practices.

“A two-week paid discovery sprint is the single best risk mitigation tool in vendor selection. It costs a fraction of the total engagement and gives you empirical data that no reference check or case study can replicate. If a vendor refuses to participate in a paid discovery, ask yourself why.”

Structured Feedback Sessions

The presence and quality of structured feedback sessions is one of the clearest indicators of a vendor’s commitment to collaborative improvement. Feedback sessions—whether conducted as sprint retrospectives, monthly relationship reviews, or quarterly strategic alignment meetings—create the mechanism through which communication breakdowns are identified, cultural friction is addressed, and process improvements are implemented before small issues become systemic problems.

Evaluate whether the vendor conducts regular, structured feedback sessions as part of their standard engagement model. Mature vendors come to feedback sessions with data (velocity trends, defect rates, cycle time metrics), facilitate honest conversation about what’s working and what isn’t, and follow through on action items with visible accountability.

Evaluating Escalation and Conflict

Every vendor relationship will encounter friction. The question is not whether conflicts will arise but how they will be resolved. Mature vendor organisations have documented conflict resolution protocols—formal, repeatable processes for identifying, escalating, and resolving disagreements before they damage the working relationship or derail delivery.

Request examples of past conflicts with clients and how their conflict resolution protocols worked in practice. A vendor that claims they’ve never had a significant disagreement with a client is either extraordinarily lucky or insufficiently transparent. Mature vendors can articulate specific conflict scenarios, the root causes, the resolution approach, and the lessons learned.

“Pick a vendor who knows how to fight fair. Every long-term partnership has conflicts—over scope, over quality, over priorities. What matters is whether the vendor has mature conflict resolution protocols and treats those conflicts as problems to solve together or as battles to win.”

Pricing and Contract Models

Pricing structure is not merely a financial consideration—it is a risk allocation mechanism that fundamentally shapes incentives, behaviours, and outcomes throughout the engagement lifecycle. The pricing model you select determines who bears the cost of uncertainty, who benefits from efficiency gains, and how change is managed when requirements evolve. Before entering contract negotiation, demand cost transparency from the outset: every pricing model should clearly define the scope of services, maintenance options, and escalation mechanisms. Any hidden fees should be surfaced before service agreements are signed—not discovered mid-project when timeline and budget constraints are already under pressure. For a comprehensive analysis of cost structures, see our article on custom software development pricing.

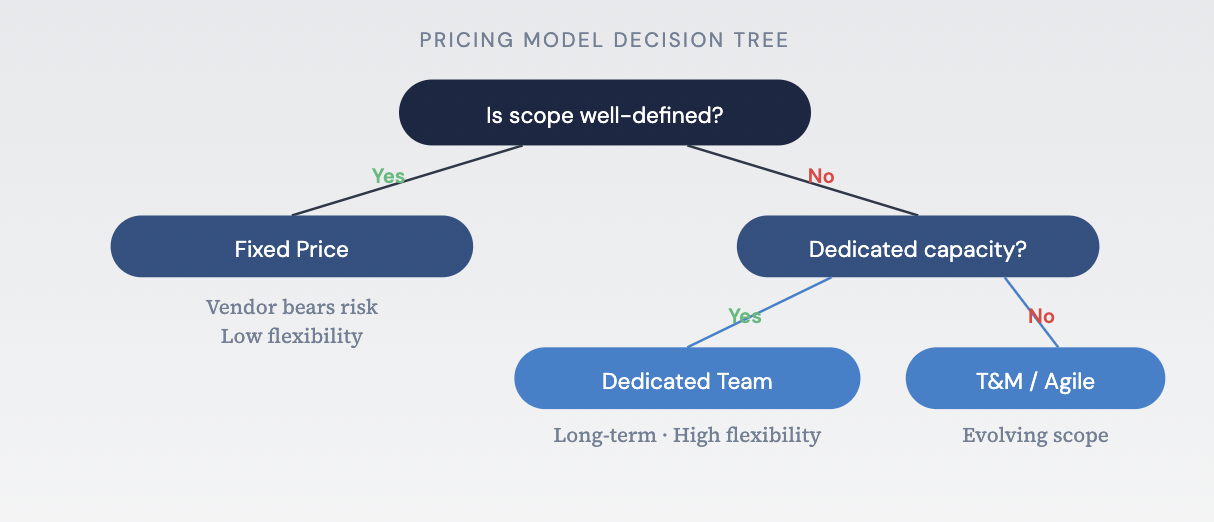

“Every pricing model is a bet. Fixed price is a bet that the scope is well-defined. Time and materials is a bet that governance is strong enough to prevent waste. Dedicated team is a bet that you can manage utilisation effectively. The question isn’t which model is best—it’s which bet you’re most prepared to win.”

Fixed Price Engagements

Fixed price contracts transfer delivery risk to the vendor and are appropriate when scope of services is clearly defined, requirements are stable, and the solution architecture is well-understood. The trade-off is reduced flexibility: change requests become formal contract negotiation exercises, and vendors are incentivised to interpret ambiguity in their favour—delivering the minimum that satisfies the contractual definition, which may not align with the client’s intent.

To mitigate this, build an explicit change management framework into the contract—including escalation mechanisms for disputes and a streamlined process for minor scope adjustments that doesn’t require full commercial renegotiation. Without these escalation mechanisms, even minor changes can stall delivery while both sides negotiate pricing model adjustments.

“Fixed price works beautifully when the scope is genuinely fixed. But in twenty years, I’ve seen maybe a dozen projects where the scope truly didn’t change. For everything else, fixed price creates a fiction of certainty that both parties pay for in different ways.” — Director, Contract Strategy & Negotiation

Time and Materials (T&M)

T&M contracts retain flexibility but require robust governance on the client side. They are well-suited to projects where requirements are expected to evolve or where the solution architecture will emerge through prototyping and experimentation. The risk is cost escalation without commensurate value delivery—mitigated through sprint-level budget tracking, velocity metrics, and regular business value reviews.

Agile billing is a variation that aligns invoicing with sprint cycles and delivered increments rather than hours logged. Agile billing improves cost transparency by tying payments to demonstrable progress and makes budget alignment easier to track against timeline and budget constraints. When structuring service agreements for agile billing, specify the mechanism clearly: per-sprint fixed fee, hourly with sprint caps, or outcome-linked increments. The choice affects both cost transparency and the vendor’s incentive structure.

Dedicated Team Model

This model provides continuity, deep domain immersion, and high flexibility but demands strong internal product ownership and backlog management. The quality of outcomes depends heavily on how you hire and assess the software developers assigned to your team. Budget alignment is critical: ensure your internal capacity to direct the team justifies the vendor’s staffing costs. Without budget alignment between what you spend and the value you extract, a dedicated team quietly becomes overhead rather than an asset.

“Dedicated teams are the most powerful engagement model when they work—and the most insidious when they don’t. The success factor isn’t the vendor’s team quality; it’s the client’s ability to provide clear direction, a prioritised backlog, and meaningful performance feedback. Without a strong internal product owner, a dedicated team is just an expensive body shop.” — Senior Manager, Engagement Models & Delivery

Hybrid and Outcome-Based Models

Increasingly, organisations are exploring hybrid models that combine elements of fixed price and T&M—for example, a fixed price discovery phase followed by T&M delivery, or a T&M engagement with a shared risk/reward mechanism tied to business outcomes. Outcome-based pricing, where the vendor’s compensation is partially tied to defined business metrics, represents the frontier of vendor engagement models. These models require sophisticated measurement frameworks, mutual trust, and contractual precision to execute successfully.

Contract Negotiation and Service Agreements

Approach contract negotiation as the design of the governance framework for the entire relationship—not a purchasing formality. Service agreements should define scope of services, pricing model, payment terms, intellectual property ownership, warranty periods, maintenance options, and exit clauses. Maintenance options deserve particular attention: post-go-live support, bug-fix SLAs, and long-term maintenance options are frequently underspecified during contract negotiation and become sources of dispute later.

Hidden Fees and Cost Transparency

Scrutinise proposals for hidden fees that may not be immediately visible: environment and infrastructure provisioning, third-party licence fees, data migration complexity surcharges, post-go-live hypercare periods, and knowledge transfer activities. Hidden fees erode budget alignment and undermine trust. Demand cost transparency—ask for the fully loaded number including all hidden fees, and verify it against your timeline and budget constraints.

Cost transparency also means understanding how the pricing model behaves under change. What happens when scope of services expands by 20%? Are there hidden fees triggered by timeline extensions or additional contract negotiation rounds? Ensure these scenarios are addressed explicitly in service agreements so that timeline and budget constraints are protected from the start.
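The scenario questions above can be turned into simple arithmetic before negotiation begins. The following is a minimal sketch, not a pricing methodology: the function names, the line items, and every figure are hypothetical and chosen only to illustrate how a fully loaded number and a 20% scope expansion might be modelled.

```python
def fully_loaded_cost(base_price, extras):
    """Proposal price plus every additional line item the vendor has disclosed."""
    return base_price + sum(extras.values())


def cost_after_scope_change(base_price, scope_growth, change_premium=0.0):
    """Price if scope grows by `scope_growth` (0.20 means 20%).

    `change_premium` is an assumed uplift applied to the added work only,
    for example where change orders are billed at a higher rate.
    """
    added_work = base_price * scope_growth
    return base_price + added_work * (1 + change_premium)


# Hypothetical figures for illustration only.
proposal = 500_000
disclosed_extras = {
    "infrastructure_provisioning": 40_000,
    "third_party_licences": 60_000,
    "data_migration": 25_000,
    "hypercare": 30_000,
    "knowledge_transfer": 15_000,
}

loaded = fully_loaded_cost(proposal, disclosed_extras)
expanded = cost_after_scope_change(proposal, scope_growth=0.20, change_premium=0.15)
```

Running the same scenario against each vendor's stated change terms makes it easier to compare how each pricing model behaves under change, rather than comparing headline prices alone.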

“The proposal price is not the project price. It’s the starting point of a cost model that extends well beyond go-live. We’ve seen clients blindsided by six-figure annual licensing costs that were buried in a technical appendix, or by ‘optional’ but practically essential services that weren’t included in the base proposal. Ask for the fully loaded number—and verify it.”

Pricing model decision tree: choosing the right model based on scope definition and capacity needs

Security and Intellectual Property Considerations

In an era of escalating regulatory scrutiny and increasingly sophisticated threat landscapes, data security and IP governance are non-negotiable dimensions of vendor evaluation. A vendor’s security posture—their security certifications, security protocols, data encryption standards, and incident response plan—is a reflection of organisational maturity that will directly impact your exposure profile. Equally, intellectual property rights must be defined with precision before any code is written. Confidentiality, data security, and clear intellectual property rights form the foundation of trust in any vendor relationship.

“We’ve seen deals unwind six months into delivery because IP ownership was addressed with a single paragraph in an MSA. Intellectual property rights should be as detailed and specific as the technical architecture. Ambiguity in this area isn’t just a legal risk—it’s an existential business risk.”

Confidentiality and Security Protocols

Evaluate the vendor’s confidentiality practices and security protocols: access controls, employee onboarding and offboarding procedures, data handling protocols for client-sensitive material, and clean-desk/clean-screen policies. Strong confidentiality practices are the foundation of data security—without them, security certifications are meaningless paperwork.

For engagements involving particularly sensitive information (trade secrets, pre-announcement product plans, M&A-related data), consider supplementary confidentiality measures: named-individual access restrictions, segregated project environments, audit trails and monitoring of who accesses what data and when, and periodic access audits. Ensure that audit trails and monitoring cover not just production systems but also development and staging environments, where data security is often weaker.

Intellectual Property Rights and Licensing

Define intellectual property rights with surgical precision. Vague or boilerplate language in the MSA creates latent risk that manifests at the worst possible moment—typically when the client wishes to engage a different vendor, sell the business, or license the technology.

Project-created IP includes custom code, configurations, designs, and documentation developed specifically for your engagement. In most cases, the client should retain full intellectual property rights over code that represents core business logic or competitive differentiation—this is non-negotiable. Clearly distinguish between foreground IP (created for your project, where intellectual property rights should vest with the client) and background IP (the vendor’s pre-existing frameworks, libraries, and tools, which are typically licensed rather than transferred).

Address open-source components with equal specificity: which libraries are incorporated, under what licences (MIT, Apache 2.0, GPL, LGPL—each with materially different implications for intellectual property rights), and what obligations those licences impose on the client’s use and distribution of the delivered software.

“Intellectual property rights are one of those areas where the cost of getting it right during contract negotiation is trivial compared to the cost of getting it wrong after delivery. Spend the money on a technology transactions attorney who understands software IP.”

Security Certifications and Regulatory Compliance

Verify the vendor’s compliance posture against applicable standards and security certifications: SOC 2 Type II, ISO 27001, HIPAA, PCI DSS, GDPR, CCPA, and any industry-specific regulations. Security certifications such as ISO 27001 and SOC 2 demonstrate that the vendor has invested in systematic data security processes—but security certifications alone are not sufficient. For healthcare engagements, verify that the vendor can execute a Business Associate Agreement and that their security protocols meet HIPAA requirements in practice, not just on paper.

Confirm compliance with data residency and sovereignty requirements where cross-border data transfer is involved. The regulatory landscape is evolving rapidly—Schrems II implications, GDPR enforcement trends, HIPAA audit intensification—and the vendor must demonstrate the capability to adapt their security protocols accordingly.

“Compliance isn’t a checkbox—it’s a capability. A SOC 2 Type II report tells you what was true during the audit period. What matters more is whether the vendor has the processes, personnel, and culture to maintain that posture continuously.”

Data Security, Data Encryption, and Incident Response

Evaluate the vendor’s data security practices across the full development and operations lifecycle. Data encryption should be non-negotiable: AES-256 at rest, TLS 1.2+ in transit, and proper key management through a dedicated secrets management solution. Weak data encryption or inconsistent security protocols are disqualifying—if a vendor cannot demonstrate robust data encryption practices, their other security certifications should be viewed with scepticism.
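As one concrete way to probe the "TLS 1.2+ in transit" requirement, a reviewer might ask the vendor to show where the minimum protocol version is enforced in client code. The sketch below uses Python's standard `ssl` module; the function name is an illustrative assumption, and it covers only transport encryption, not encryption at rest or key management.

```python
import ssl


def strict_client_context():
    """Client-side TLS context that refuses anything older than TLS 1.2."""
    # create_default_context() enables certificate and hostname verification.
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


context = strict_client_context()
```

A context built this way can be passed to standard-library clients that accept an `ssl.SSLContext`, so the version floor is set in one place rather than scattered across call sites.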

Data security extends beyond encryption to include secure coding practices, dependency management, and secrets handling. These details separate vendors with genuine data security maturity from those with surface-level compliance. Confirm the existence of a documented incident response plan that specifies notification timelines, escalation procedures, containment protocols, and post-incident remediation steps.

Supply Chain Security and Secure Data Transfer Methods

Modern software development involves extensive use of third-party libraries, frameworks, and services—each a potential attack vector. Evaluate how the vendor manages supply chain risk: dependency scanning, SBOM (Software Bill of Materials) generation, and secure data transfer methods between development, staging, and production environments.

Secure data transfer methods matter at every stage: how does the vendor move code from development to production, and how is client data protected during onboarding and testing?

“The attack surface of a modern application isn’t just the code your vendor writes—it’s every dependency in the stack, every CI/CD tool in the pipeline, and every cloud service in the architecture. A vendor who can’t articulate their supply chain security posture is a vendor who doesn’t fully understand their own risk profile.”

Red Flags and Risk Mitigation

Software project failure statistics paint a sobering picture of what can go wrong without proper planning and due diligence. These are not abstract numbers; they represent billions of dollars in destroyed value and thousands of organisational setbacks that were, in most cases, preventable through proper due diligence, disciplined risk management, and early identification of warning signs. The importance of due diligence when choosing a service provider cannot be overstated—recognising red flags before the contract is signed is exponentially less costly than discovering them after delivery has begun and switching costs are high.

“In our practice, we maintain an internal taxonomy of vendor red flags drawn from hundreds of engagements. The single most reliable predictor of project distress? The vendor that says yes to everything. A mature partner pushes back, asks hard questions, and tells you what you need to hear—not what you want to hear. Proper due diligence catches these patterns before they become contractual obligations.”

Unrealistic Timelines and Effort Estimates

If a vendor’s proposed timeline is significantly shorter than the consensus estimate from other qualified bidders (more than 20% below the median), treat it as a risk signal rather than a competitive advantage. Unrealistic estimates typically mean the vendor either misunderstood the scope or plans to recover margin through change orders. Effective risk management requires flagging any such bid for additional due diligence.

“An unrealistically low timeline is a vendor telling you they’re planning to make up the difference in change orders. Our risk management practice flags any bid that’s more than 20% below the median as requiring additional due diligence—and in two-thirds of cases, that additional scrutiny reveals material gaps in the proposal.”
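The 20%-below-median rule is easy to apply mechanically once bids are in hand. The following is a minimal sketch of that check; the function name, vendor names, and timeline figures are hypothetical.

```python
from statistics import median


def flag_low_bids(timelines_weeks, threshold=0.20):
    """Return vendors whose timeline is more than `threshold` below the median."""
    mid = median(timelines_weeks.values())
    cutoff = mid * (1 - threshold)
    return sorted(name for name, weeks in timelines_weeks.items() if weeks < cutoff)


# Hypothetical bids, in weeks; illustrative only.
bids = {"Vendor A": 40, "Vendor B": 44, "Vendor C": 30, "Vendor D": 42}
flagged = flag_low_bids(bids)
```

With these figures the median is 41 weeks, so the cutoff is 32.8 weeks and only Vendor C is flagged. A flag is a prompt for further due diligence, not an automatic disqualification.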

Financial Reviews and Commercial Red Flags

Financial reviews and contract terms work in tandem to limit the risk of financial loss, much as a security audit helps prevent a costly data breach. Conduct financial reviews of the vendor’s business health: revenue stability, client concentration, cash reserves, and litigation history. A vendor under financial pressure may cut corners on staffing, reduce investment in quality assurance and testing methods, or deprioritise your project in favour of higher-margin work. Financial reviews are a core component of due diligence that many organisations skip and later regret.

Lack of Relevant Experience Disguised as Versatility

One of the most common—and most costly—red flags is a vendor’s lack of relevant experience masked by broad claims of versatility. If a vendor cannot demonstrate experience with projects of similar size and complexity, it likely lacks the necessary expertise. The antidote is specificity: ask for exact project names, exact team members, and exact outcomes. If the vendor cannot produce concrete evidence, treat the lack of relevant experience as a disqualifying factor rather than a minor concern.

“A lack of relevant experience is the red flag vendors are most skilled at concealing. They’ll reframe tangentially related work as directly applicable and present architectural approaches that miss the domain nuances that make or break production systems. The antidote is specificity—generalities are where a lack of relevant experience hides.”

Questionable Business Practices

Questionable business practices are the red flags that vendors work hardest to conceal. Warning signs include avoiding direct answers, changing terms frequently, or failing to provide an NDA promptly. Other examples include inflating team sizes with engineers who won’t be assigned to your project, misrepresenting subcontractor relationships, and providing curated references while hiding problematic engagements.

“Questionable business practices are the red flags that due diligence is specifically designed to uncover. The best protection is triangulation: verify claims independently, speak to references the vendor didn’t curate, and check public records. If something doesn’t add up during the evaluation, it won’t improve after the contract is signed.”

Quality Assurance and Testing Methods

A vendor’s approach to quality assurance and testing methods reveals their engineering maturity and directly predicts the defect rate of delivered software. This includes functional testing, performance testing, and security testing. Weak quality assurance and testing methods across any of these dimensions should be treated as a serious risk management concern.

A vendor that treats functional testing as a final-stage activity will deliver software with defects that should have been caught weeks earlier, increasing remediation cost and eroding client confidence. Mature vendors embed functional testing throughout the development cycle with automated test suites that run on every build. Performance testing should simulate production traffic patterns, not just synthetic benchmarks—vendors who skip performance testing are shipping risk directly to the client’s production environment.

Security testing should include static analysis, dynamic analysis, dependency scanning, and penetration testing. Due diligence here is more than a compliance exercise: whether the problem is a performance issue, a security vulnerability, or a usability concern, catching it early through rigorous quality assurance and testing methods saves significant time, money, and reputational damage.

Security Audit Findings and Risk Exposure

A vendor’s security posture deserves dedicated scrutiny within the red flags framework, not just as a compliance checkbox but as a risk management signal. Request the vendor’s most recent security audit results. A clean security audit is encouraging; a security audit with findings that were promptly remediated demonstrates maturity; a vendor that refuses to share security audit results is a vendor you should not trust with your data.

Evaluate how the vendor manages security vulnerabilities: what is their process for identifying, prioritising, and remediating security vulnerabilities? How quickly do they patch critical security vulnerabilities once disclosed? Unmanaged security vulnerabilities in the vendor’s codebase become your security vulnerabilities the moment their software runs in your environment. Timely identification through security testing and regular security audit practices prevents both financial and reputational damage.

Project Success Rate as a Composite Risk Indicator

While individual red flags provide specific warning signals, a vendor’s overall project success rate (the percentage of engagements delivered within acceptable parameters of scope, timeline, budget, and quality) serves as a composite risk indicator that integrates multiple dimensions of delivery capability into a single metric. Ask for the vendor’s project success rate over the last 24 months and how they define success. A low project success rate, or an unwillingness to share the data at all, is a significant red flag.

Building a Structured Risk Assessment Framework

Rather than evaluating red flags in isolation, construct a formal risk assessment matrix that scores each vendor across 15–20 risk dimensions, weights those dimensions according to your project’s specific risk profile, and produces a composite risk score that can be compared across candidates.

Risk dimensions should include: delivery risk (estimation accuracy, project success rate, methodology maturity), technical risk (architecture quality, stack proficiency, quality assurance and testing methods—including the rigour of functional testing, performance testing, and security testing), people risk (team stability, key-person dependency, lack of relevant experience), commercial risk (pricing model, financial reviews findings, hidden costs), security risk (security audit results, security vulnerabilities management, incident response maturity), and relationship risk (communication quality, cultural fit, conflict resolution capability).

Some risks are acceptable with proper mitigation; others are disqualifying regardless of the vendor’s other strengths. The output is not a single “pass/fail” determination but a nuanced risk profile that enables informed decision-making.
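The weighted matrix described above can be sketched in a few lines. This is an illustrative simplification, not a prescribed model: it collapses the 15–20 dimensions into the six risk categories named in the text, and the weights, the 1-to-5 scale (higher means riskier), and the disqualification threshold are all assumptions to be replaced with your own.

```python
from dataclasses import dataclass

# Hypothetical weights for the six risk categories; tune to your project.
WEIGHTS = {
    "delivery": 0.25,
    "technical": 0.20,
    "people": 0.15,
    "commercial": 0.15,
    "security": 0.15,
    "relationship": 0.10,
}


@dataclass
class RiskProfile:
    vendor: str
    composite: float      # weighted average, 1 (low risk) to 5 (high risk)
    disqualifying: list   # categories scored at or above the disqualifying level


def assess(vendor, scores, weights=WEIGHTS, disqualify_at=5):
    """Combine per-category risk scores (assumed scale 1-5) into a profile."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total_weight = sum(weights.values())
    composite = sum(scores[d] * w for d, w in weights.items()) / total_weight
    blockers = sorted(d for d in weights if scores[d] >= disqualify_at)
    return RiskProfile(vendor, round(composite, 2), blockers)


# Hypothetical scores for one candidate.
profile = assess("Vendor X", {
    "delivery": 2, "technical": 3, "people": 2,
    "commercial": 4, "security": 5, "relationship": 1,
})
```

Here the candidate's weighted score is about 2.85, yet the profile still lists security as disqualifying. Keeping the two outputs separate reflects the point that some risks rule a vendor out regardless of an otherwise acceptable composite.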

“Risk management in vendor selection isn’t about finding a zero-risk option—that doesn’t exist. It’s about understanding each vendor’s specific risk profile through comprehensive due diligence—financial reviews, security audits, quality assurance evaluation, reference validation—and comparing those profiles against your risk tolerance.”

Post-Deployment Support and Maintenance

The vendor’s commitment to post-launch support is as critical as their delivery capability, and in many ways more consequential for long-term value realisation. Systems are not static; they operate in dynamic environments where user behaviour, business requirements, regulatory landscapes, and technology platforms evolve continuously. Without ongoing maintenance, software can quickly become obsolete, vulnerable to security risks, and unable to support evolving business needs. Post-launch support encompasses bug fixes, ongoing updates, performance monitoring, security patch management, and testing and maintenance services—all governed by clearly defined service level agreements (SLAs) and maintenance plans.

These activities are collectively known as software maintenance services: all post-deployment work aimed at keeping the software functional, secure, and aligned with business needs, including performance enhancements and adaptation to new environments. Post-launch support is the safety net and growth engine that keeps your software relevant, stable, and secure long after launch day.

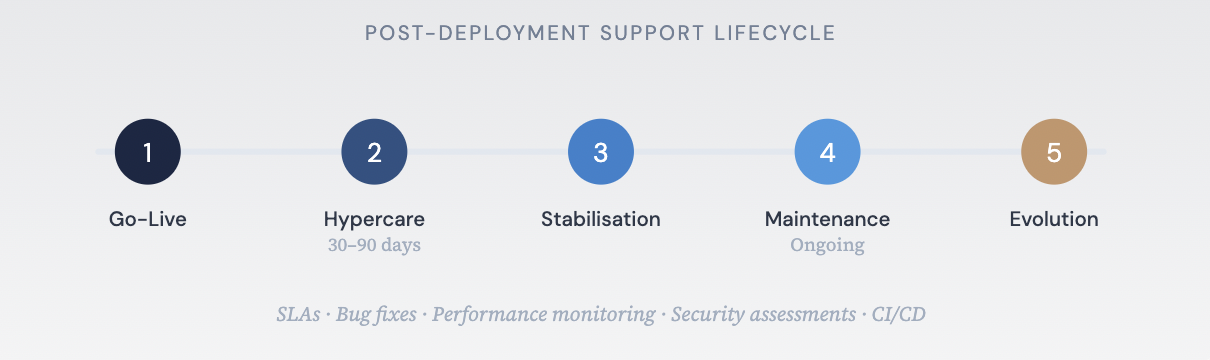

“The first 90 days after go-live are where vendor relationships are truly tested. That’s when edge cases surface, when user behaviour deviates from assumptions, and when the production environment reveals things that staging never could. A vendor that treats go-live as the end of its obligation hasn’t internalised what it means to be a partner. And the client that didn’t negotiate post-go-live terms is a client that’s about to learn an expensive lesson.”

Service Level Agreements (SLAs) and Support Tiers

Define service level agreements (SLAs) with precision and specificity, not aspirational language. SLAs set out the expectations, methods, and costs associated with post-launch support and testing and maintenance services. Key SLA dimensions include response time by severity level, availability guarantees expressed as uptime percentages, and escalation paths with named contacts at each level.

Distinguish between L1 support (triage and routing), L2 support (technical diagnosis by domain-specific engineers), and L3 support (engineering-level remediation requiring code changes). Understand which tiers the vendor staffs with dedicated support teams and which are subcontracted. Dedicated support teams who understand the architecture and codebase are quicker at identifying bugs and deploying bug fixes with minimal downtime. Ensure that service level agreements (SLAs) include penalties for non-compliance that create genuine accountability, not token gestures.
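Uptime percentages are easier to negotiate when translated into the downtime they actually permit. The sketch below does that conversion and adds a simple response-time check by severity; the function names and the response targets are hypothetical placeholders, not recommended values.

```python
def allowed_downtime_minutes(uptime_percent, period_days=30):
    """Minutes of downtime permitted by an uptime guarantee over a period."""
    if not 0 <= uptime_percent <= 100:
        raise ValueError("uptime_percent must be between 0 and 100")
    total_minutes = period_days * 24 * 60
    return total_minutes * (100 - uptime_percent) / 100


# Hypothetical first-response targets by severity, in minutes.
RESPONSE_TARGETS = {"critical": 30, "high": 120, "medium": 480, "low": 1440}


def sla_breached(severity, minutes_to_respond, targets=RESPONSE_TARGETS):
    """True when the first response took longer than the target for that severity."""
    return minutes_to_respond > targets[severity]


monthly_budget = allowed_downtime_minutes(99.9)
```

Over a 30-day period, a 99.9% guarantee permits roughly 43 minutes of downtime. Stating the figure in minutes makes it clearer whether the penalty clauses attached to it create genuine accountability.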

Maintenance Plans, Ongoing Updates, and Update Cadence

Clarify the vendor’s maintenance plans and approach to ongoing updates across several dimensions. Comprehensive maintenance plans should cover security patch management (what is the SLA for applying critical security patches for zero-day vulnerabilities, and what is the standard cadence for routine security patch management?), dependency updates (how does the vendor manage ongoing updates to third-party libraries and platform components?), framework version migration (when a major framework version is released, what is the vendor’s process for evaluating, planning, and executing the migration?), and infrastructure evolution (how does the vendor adapt the system as cloud platforms deprecate services or introduce new capabilities?).

Maintenance plans should specify the cadence of ongoing updates—both scheduled releases and emergency patches—including rollback procedures if a patch introduces new issues. Establish whether maintenance plans and ongoing updates are included in the base engagement fee or priced as a separate retainer.

Even with rigorous testing, no software is entirely free of bugs. Bug fixes and code corrections to bring the software into conformity with operating specifications should be covered under maintenance plans. All bugs should be triaged to identify their level of risk, impact, severity, and reach, and bug fixes should be prioritised accordingly. Define how bug fixes are delivered: as hotfixes for critical issues or as part of regular ongoing updates depending on urgency.
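The triage step can be made explicit with a small scoring scheme. The following is a sketch under stated assumptions: the four factors come from the paragraph above, but the 1-to-4 scales, the multiplicative priority score, and the hotfix rule are illustrative choices rather than an established standard.

```python
from dataclasses import dataclass


@dataclass
class Bug:
    key: str
    risk: int       # 1 (unlikely to recur) to 4 (certain to recur); assumed scale
    impact: int     # 1 (minor inconvenience) to 4 (blocks a core workflow)
    severity: int   # 1 (cosmetic) to 4 (critical)
    reach: int      # 1 (few users) to 4 (all users)

    @property
    def priority(self):
        # Multiplying the factors lets one very high factor dominate the ordering.
        return self.risk * self.impact * self.severity * self.reach


def triage(bugs):
    """Order bugs from highest to lowest priority."""
    return sorted(bugs, key=lambda b: b.priority, reverse=True)


def delivery_channel(bug, hotfix_at=4):
    """Critical-severity bugs ship as hotfixes; the rest wait for a scheduled update."""
    return "hotfix" if bug.severity >= hotfix_at else "scheduled update"


# Hypothetical backlog for illustration.
backlog = [
    Bug("UI-12", risk=1, impact=1, severity=1, reach=2),
    Bug("PAY-7", risk=3, impact=4, severity=4, reach=3),
    Bug("RPT-3", risk=2, impact=2, severity=2, reach=2),
]
ordered = [b.key for b in triage(backlog)]
```

Whatever scheme is agreed, writing it into the maintenance plan gives both sides a shared basis for deciding which fixes justify an emergency release.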

Post-deployment support lifecycle: from go-live through hypercare, stabilisation, maintenance, and evolution

Hypercare and Stabilisation Period

The period immediately following go-live—typically 30–90 days, referred to as the “hypercare” or stabilisation period—requires elevated post-launch support levels and accelerated response times. During this period, the vendor should provide enhanced performance monitoring, proactive issue identification, and priority access to the delivery team rather than the support organisation alone. Dedicated support teams should be assigned during hypercare, with named individuals who have direct knowledge of the system architecture and codebase.

Define the hypercare period explicitly in the contract, including the enhanced service level agreements (SLAs) that apply, the staffing model for dedicated support teams, the criteria for exiting hypercare and transitioning to standard post-launch support, and the cost model. During hypercare, performance monitoring should be continuous—tracking response times, error rates, resource utilisation, and user behaviour patterns against pre-launch baselines.

Performance Monitoring, Security Assessments, and Data Protection

Post-launch support must include robust performance monitoring to ensure the system continues to meet its operational targets. Performance monitoring should track application response times, database query performance, API latency, infrastructure resource utilisation, and error rates—with automated alerting when metrics breach defined thresholds. Effective performance monitoring identifies degradation before users notice it, enabling proactive remediation rather than reactive firefighting.
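Threshold-based alerting of the kind described above reduces to comparing each observed metric against an agreed limit. The sketch below shows that comparison only; the metric names and limits are hypothetical, and a real deployment would rely on the vendor's monitoring platform rather than hand-written checks.

```python
# Hypothetical thresholds; metric names and limits are illustrative assumptions.
THRESHOLDS = {
    "response_time_ms": 800,
    "db_query_ms": 200,
    "api_latency_ms": 500,
    "cpu_utilisation_pct": 85,
    "error_rate_pct": 1.0,
}


def breaches(sample, thresholds=THRESHOLDS):
    """Return {metric: (observed, limit)} for every metric above its limit."""
    return {
        name: (sample[name], limit)
        for name, limit in thresholds.items()
        if name in sample and sample[name] > limit
    }


# Hypothetical reading from a monitoring agent.
alerts = breaches({"response_time_ms": 950, "error_rate_pct": 0.4})
```

Asking the vendor which thresholds they would propose, and why, is a quick way to gauge whether their monitoring is tuned to your operational targets or left at defaults.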

Security assessments should be conducted on a regular cadence—not just at initial deployment but throughout the system’s operational life. The results of security assessments should feed directly into security patch management priorities and maintenance plans, creating a continuous feedback loop between assessment and remediation.

Data protection is a continuous obligation, not a one-time implementation. Post-launch support should ensure that data protection measures—encryption, access controls, backup procedures, and retention policies—remain effective as the system evolves. As business needs change and new integrations are added, data protection configurations must be reviewed and updated. The vendor’s testing and maintenance services should include periodic data protection audits to verify that controls remain effective and compliant.

Continuous Integration and Continuous Deployment (CI/CD)

A mature post-launch support model leverages continuous integration and continuous deployment (CI/CD) pipelines to deliver bug fixes, ongoing updates, and security patches efficiently and reliably. Continuous integration and continuous deployment (CI/CD) practices ensure that every change—whether a bug fix, a security patch, or a feature enhancement—is automatically tested, validated, and deployed through a consistent, repeatable process. Without CI/CD, maintenance releases become manual, error-prone, and slow—increasing the risk that bug fixes introduce new defects and that security patches are delayed.

Evaluate the vendor’s CI/CD maturity: do they maintain automated testing and maintenance services as part of their deployment pipeline? Are bug fixes validated through automated regression suites before reaching production? The vendor’s testing and maintenance services should include automated functional, integration, and performance tests that run as part of every CI/CD cycle.

Knowledge Transfer and Documentation

A comprehensive knowledge transfer should be a contractual deliverable, not an afterthought. This includes complete technical documentation, architecture decision records that capture not just what was built but why, operational runbooks, environment configuration guides, and recorded walkthroughs. The standard is simple: could your internal team—or a different vendor—operate and extend this system without further assistance?

Knowledge transfer is not a one-time event; it is a process that should occur throughout the engagement. Embed knowledge transfer checkpoints into the delivery milestone structure so that institutional knowledge accumulates progressively rather than being compressed into a final session.

“The most expensive knowledge transfer is the one that happens after the vendor’s team has already rolled off. Build knowledge transfer into the delivery cadence, not the closeout.”

Scalability Planning and Product Evolution

Discuss the vendor’s willingness and capacity to support the system as it scales—increased transaction volumes, additional integrations, expanded user bases, new geographic deployments, and evolving business requirements. Evaluate whether the vendor has a product mindset (continuous improvement, roadmap planning, proactive performance monitoring and optimisation) or a project mindset (deliver the defined scope and disengage). A product-oriented vendor will proactively identify opportunities for improvement, suggest architectural optimisations, and align their testing and maintenance services with the client’s evolving business needs.

Exit Strategy and Vendor Transition Planning

No matter how successful the partnership, prudent governance demands a documented exit strategy. Define the conditions and process for transitioning to internal teams or an alternative vendor, including data export procedures and data protection during transition, code repository access and transfer (including full commit history), environment handover protocols, knowledge transfer requirements, a transition support period, and intellectual property confirmation. Ensure data protection standards are maintained throughout the transition period.

“The best vendor relationships are the ones where both parties know exactly how the engagement could end—and neither wants it to. That clarity creates accountability, and accountability creates trust.” — Senior Partner, Technology Strategy & Advisory

Continuous Improvement and Relationship Governance

Post-launch support is not a static arrangement. Establish a governance framework that includes regular relationship reviews covering SLA performance, incident trends (including bug-fix volume and resolution times), the status of the ongoing update pipeline, security assessment findings, and performance monitoring trends. These reviews should evaluate whether maintenance plans remain fit for purpose, whether dedicated support teams are appropriately scaled, and whether testing and maintenance services are delivering the expected quality outcomes.
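As a rough illustration of what a relationship review might put on the table, the sketch below summarises incident volume, median resolution time, and the share of incidents resolved within target for each severity level. The severity labels and target hours in `SLA_TARGET_HOURS` are assumptions made for the example; real values would come from the contract's SLA, and `Incident` and `review_summary` are hypothetical names.

```python
from dataclasses import dataclass
from statistics import median

# Hypothetical resolution targets in hours per severity level.
# Real targets come from the SLA agreed in the contract.
SLA_TARGET_HOURS = {"critical": 4, "high": 24, "low": 120}


@dataclass(frozen=True)
class Incident:
    severity: str
    hours_to_resolve: float


def review_summary(incidents):
    """Per severity: incident count, median resolution time, share resolved within target."""
    summary = {}
    for severity, target in SLA_TARGET_HOURS.items():
        hours = [i.hours_to_resolve for i in incidents if i.severity == severity]
        if not hours:
            continue  # no incidents at this severity in the review period
        within = sum(1 for h in hours if h <= target)
        summary[severity] = {
            "count": len(hours),
            "median_hours": median(hours),
            "within_sla": within / len(hours),
        }
    return summary


if __name__ == "__main__":
    period = [
        Incident("critical", 3.0),
        Incident("critical", 6.0),
        Incident("high", 10.0),
    ]
    for severity, stats in review_summary(period).items():
        print(severity, stats)
```

Comparing these figures across review periods, rather than reading a single period in isolation, is what turns them into the trend view the governance framework calls for.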

“The vendors who create the most long-term value aren’t the ones who deliver a flawless product. They’re the ones who build a relationship infrastructure that enables continuous improvement: regular retrospectives, transparent performance metrics, and a shared commitment to getting better.” — Managing Director, Strategic Vendor Management & Governance

FAQ

How do I define my project needs before choosing a software development company?

Before approaching any vendor, clearly define your project objectives, scope, budget, timeline, and expected outcomes. Draft a requirements specification that captures both functional needs and technical requirements, agree on measurable goals, and ensure stakeholder communication is structured from day one. A well-defined scope prevents misaligned expectations, scope creep, and vendor disputes later in the engagement.

What should I look for when evaluating a vendor’s technical expertise?

Evaluate the vendor’s technical skills and tech stack alignment, domain expertise, development methodology, engineering practices, and quality assurance processes. Request a technical capabilities matrix, verify certifications such as AWS, Azure, or Google Cloud, and assess their CI/CD practices, version control hygiene, and testing procedures. The most reliable way to evaluate technical skills is through a paid technical proof of concept.

What are the main pricing models for software development engagements?

The three primary pricing models are fixed price (best when scope is clearly defined and stable), time and materials (best when requirements are expected to evolve), and dedicated team (best for long-term engagements requiring continuity and deep domain immersion). Hybrid and outcome-based models are also emerging. Each model allocates risk differently — the right choice depends on your project’s certainty of scope, internal governance capacity, and tolerance for cost variability.

How do I assess a vendor’s communication and collaboration capabilities?

Evaluate responsiveness during the sales process, project management practices such as sprint cadence and backlog management, cultural fit across dimensions like communication directness and decision-making speed, and the vendor’s approach to team integration. Where feasible, begin with a paid discovery phase or pilot project to observe actual working behaviour before committing to a full engagement.

What security and intellectual property considerations should I address?

Verify the vendor’s security certifications (SOC 2 Type II, ISO 27001), data encryption standards (AES-256 at rest, TLS 1.2+ in transit), incident response plans, and supply chain security practices. Define intellectual property rights with precision — distinguish between foreground IP (which should vest with the client) and background IP (typically licensed). Address open-source component licensing and ensure confidentiality protocols cover development and staging environments.

What are the biggest red flags when choosing a software development company?

Key red flags include unrealistic timelines more than 25% below the median estimate, a lack of relevant experience disguised as broad versatility, questionable business practices such as avoiding direct answers or changing terms frequently, weak quality assurance and testing methods, refusal to share security audit results, and financial instability. The single most reliable predictor of project distress is a vendor that says yes to everything without pushing back.

What should post-deployment support and maintenance include?

Post-deployment support should include clearly defined SLAs with response times by severity level, a hypercare period of 30–90 days after go-live, ongoing maintenance plans covering security patches and dependency updates, continuous performance monitoring, regular security assessments, CI/CD pipelines for delivering bug fixes and updates, comprehensive knowledge transfer, and a documented exit strategy for vendor transition planning.

Conclusion

Vendor selection is not a procurement exercise — it is a strategic decision that shapes your organisation’s technology trajectory, operational capabilities, and competitive position for years to come. The framework presented in this guide is designed to be comprehensive but not prescriptive: every organisation must calibrate the depth and rigour of each evaluation dimension to the scale, complexity, and strategic significance of the specific engagement.

The organisations that consistently achieve the best vendor selection outcomes share three characteristics: they invest in internal clarity before engaging the market, they evaluate vendors against defined criteria rather than intuition, and they treat the vendor relationship as a partnership that requires ongoing governance, not a transaction that concludes at go-live. Understanding what a software development company does across the full delivery lifecycle provides essential context for this evaluation.

Technology delivery is inherently uncertain. The goal of rigorous vendor selection is not to eliminate uncertainty but to choose a partner whose capabilities, culture, and commitment equip them to navigate that uncertainty alongside you — and to structure the relationship in a way that keeps both parties aligned when the inevitable surprises arise.

“After hundreds of vendor selection engagements, the pattern is clear: the clients who do the best aren’t the ones who find the perfect vendor — because the perfect vendor doesn’t exist. They’re the ones who do the work upfront, ask the right questions, build the right governance structure, and then commit to making the relationship succeed. Vendor selection is 30% choosing the right partner and 70% being the right client.” — Senior Partner, Technology Strategy & Advisory