In an era where digital capability increasingly determines competitive positioning and enterprise valuation, legacy software systems represent one of the most consequential—yet frequently underestimated—factors in deal economics and long-term business performance. Organizations carrying aging technology portfolios face a compounding cost curve: every quarter of deferred modernization erodes margins, amplifies operational risk, and narrows the window for strategic pivots.

Understanding Legacy Systems

A legacy system, in its simplest definition, is any software or hardware environment that continues to serve critical business functions despite being built on outdated technology. But that definition barely scratches the surface of what these systems mean for organizations financially.

Defining Characteristics

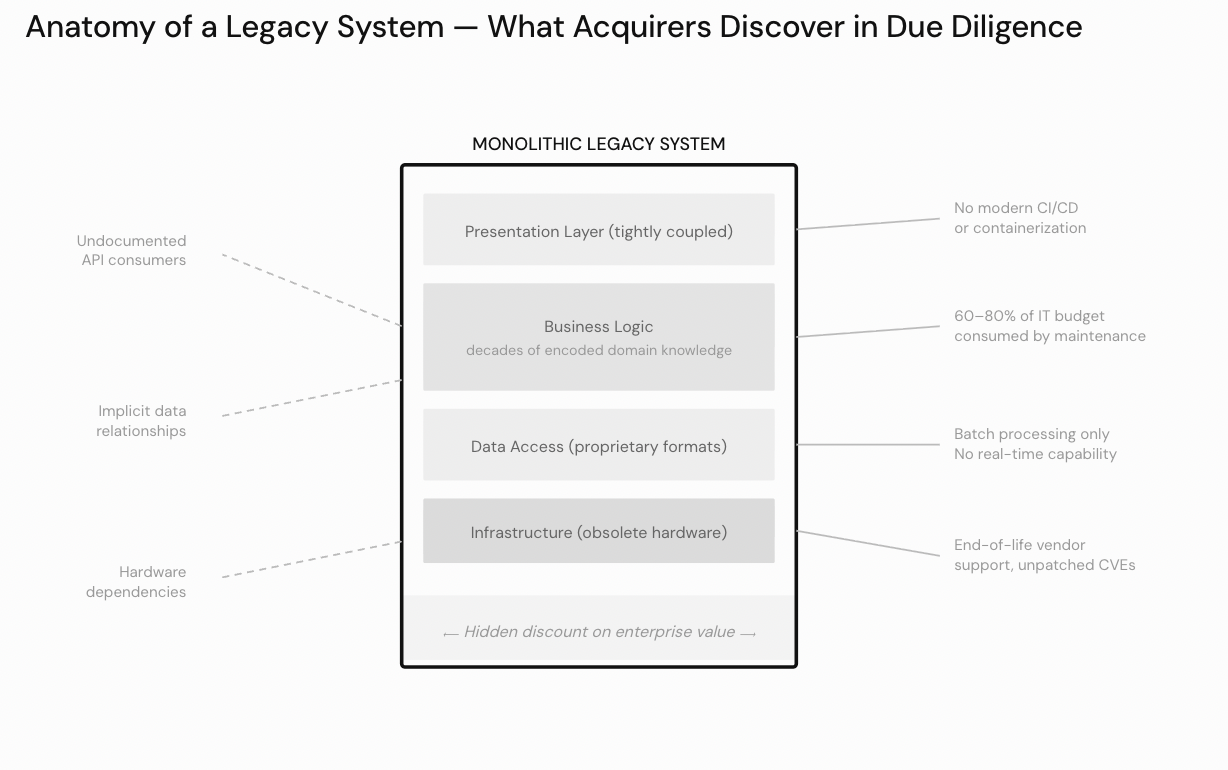

Legacy systems typically share several defining characteristics: monolithic architecture that resists incremental change, reliance on aging programming languages or frameworks for which skilled talent is increasingly scarce, tight coupling between business logic and infrastructure layers, and minimal or nonexistent API exposure. Many of these systems were originally built on mainframe platforms, designed for a world of batch processing and static workflows rather than the real-time, cloud-native environments that modern business demands. Their architecture predates concepts like containerization, distributed systems, and service-oriented design—which means adapting them to modern paradigms requires fundamental rethinking, not just incremental adjustments.

The Technical Debt Paradox

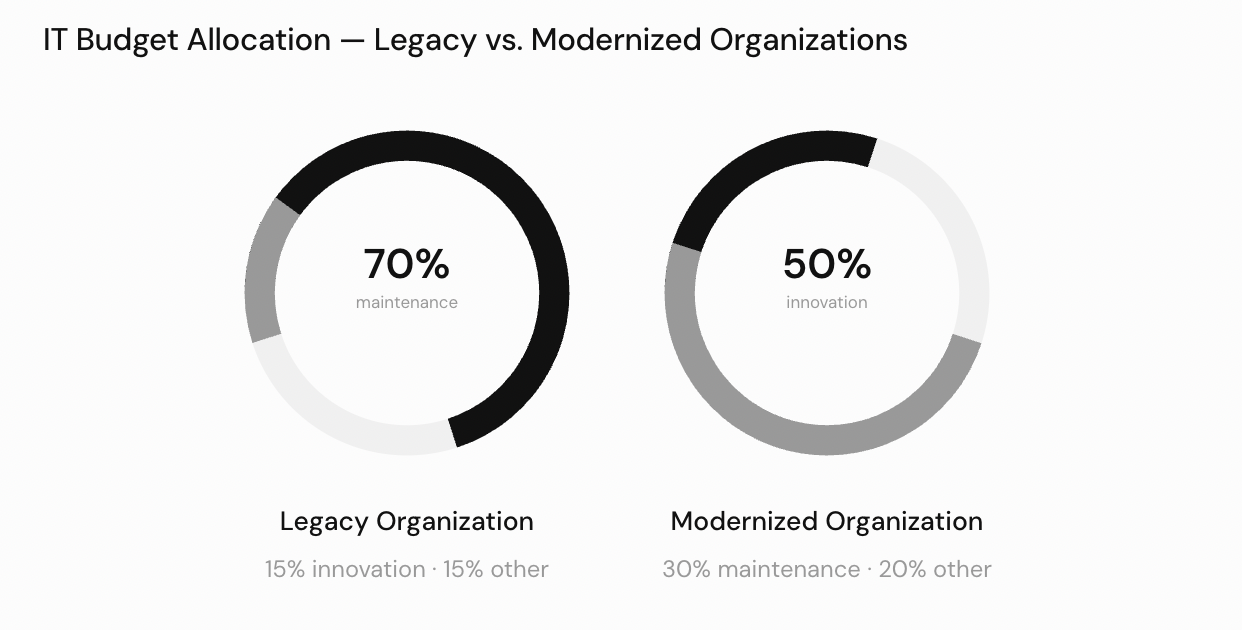

What makes legacy systems particularly challenging from a financial advisory standpoint is the paradox they present. They often encode decades of institutional knowledge and proven business logic—which makes them irreplaceable in the short term—while simultaneously creating mounting technical debt that suppresses the organization’s ability to innovate, scale, or respond to market shifts. The maintenance costs associated with these systems tend to consume a disproportionate share of IT budgets, often 60 to 80 percent, leaving little room for strategic investment. Every dollar spent keeping a legacy system running is a dollar not spent on capabilities that could generate new revenue or create competitive advantage.

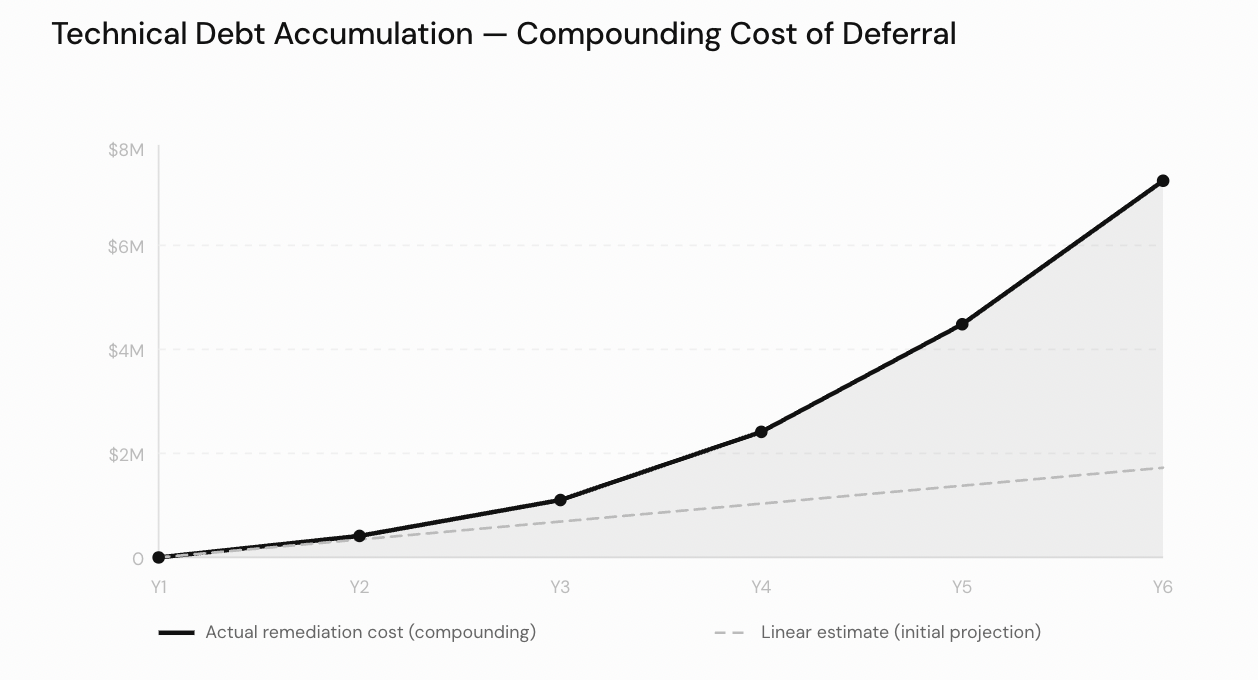

Technical debt does not grow linearly — actual remediation cost compounds as deferred decisions stack, diverging sharply from initial projections by Year 4.
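The gap between a linear projection and a compounding cost path can be sketched in a few lines. This is a minimal illustration, not a calibrated model: the function names, the $1.0M starting cost, the $0.1M yearly increment, and the 25% annual growth rate are all assumptions chosen for the example.

```python
def linear_projection(base_cost: float, annual_increment: float, years: int) -> list[float]:
    """Cost path if remediation cost rose by a fixed amount each year."""
    return [base_cost + annual_increment * year for year in range(years + 1)]


def compounding_cost(base_cost: float, growth_rate: float, years: int) -> list[float]:
    """Cost path if each deferred year multiplies the remediation cost."""
    return [base_cost * (1 + growth_rate) ** year for year in range(years + 1)]


if __name__ == "__main__":
    # Illustrative inputs only (in $M): 1.0 today, +0.1 per year projected,
    # 25% per year assumed for the compounding path.
    projected = linear_projection(1.0, 0.1, 5)
    actual = compounding_cost(1.0, 0.25, 5)
    for year, (p, a) in enumerate(zip(projected, actual)):
        print(f"Year {year}: projected {p:.2f}M, compounding {a:.2f}M, gap {a - p:.2f}M")
```

Under these assumed inputs the two paths stay close in the first year or two and separate quickly afterwards, which is the pattern the chart describes.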

Incompatibility with Modern Ecosystems

The absence of DevOps pipelines, containerization capabilities, and modern APIs makes legacy systems increasingly incompatible with the digital ecosystems that partners, customers, and regulators expect. Modern business processes depend on real-time data exchange, automated workflows, and elastic scalability, none of which are native capabilities of most legacy environments. As the gap between legacy capabilities and modern expectations widens, the integration costs required to bridge it rise at an accelerating rate.

Legacy organizations spend 70% of their IT budget on maintenance, leaving 15% for innovation. Modernized organizations shift that balance, directing 50% toward new capability.

Implications for Due Diligence and Valuation

For acquirers evaluating a target company, the state of legacy systems is a critical due diligence factor. A business running on well-maintained but outdated technology may appear operationally sound today, yet harbor significant hidden liabilities in the form of refactoring or rearchitecting costs that will inevitably come due. Sophisticated buyers model these costs explicitly, discounting enterprise value by the estimated investment required to bring the technology stack to a modern, sustainable state. Conversely, sellers who proactively modernize before going to market often capture a meaningful valuation premium, as buyers pay for reduced integration risk and accelerated synergy realization.
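One way a buyer might express that discount is to subtract the present value of the expected modernization spend from the headline enterprise value. The sketch below assumes year-end cash flows and a single discount rate; the function names and all figures are hypothetical, and a real deal model would layer in tax effects, timing risk, and synergies.

```python
def present_value(cash_flows: list[float], discount_rate: float) -> float:
    """Discount year-end cash flows (year 1, 2, ...) back to today."""
    return sum(cf / (1 + discount_rate) ** t for t, cf in enumerate(cash_flows, start=1))


def modernization_adjusted_value(
    enterprise_value: float,
    yearly_modernization_costs: list[float],
    discount_rate: float,
) -> float:
    """Headline enterprise value less the present value of required modernization spend."""
    return enterprise_value - present_value(yearly_modernization_costs, discount_rate)


if __name__ == "__main__":
    # Hypothetical target: 1,000 headline value, two years of modernization spend, 10% rate.
    adjusted = modernization_adjusted_value(1000.0, [110.0, 121.0], 0.10)
    print(f"Adjusted enterprise value: {adjusted:.1f}")
```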

Legacy software modernization sits at the intersection of technology strategy and financial value creation. For financial advisors, deal professionals, and the executives they serve, understanding the full landscape—from system fundamentals through strategic options to economic implications—is essential for making informed decisions that protect and enhance enterprise value. The organizations that approach modernization with financial discipline, strategic clarity, and realistic expectations about the complexity involved will be the ones that successfully convert their legacy technology burden into a platform for sustainable growth.

Anatomy of a legacy system as seen in due diligence — monolithic layers, undocumented APIs, and hardware dependencies compound into a hidden discount on enterprise value.

Challenges and Risks

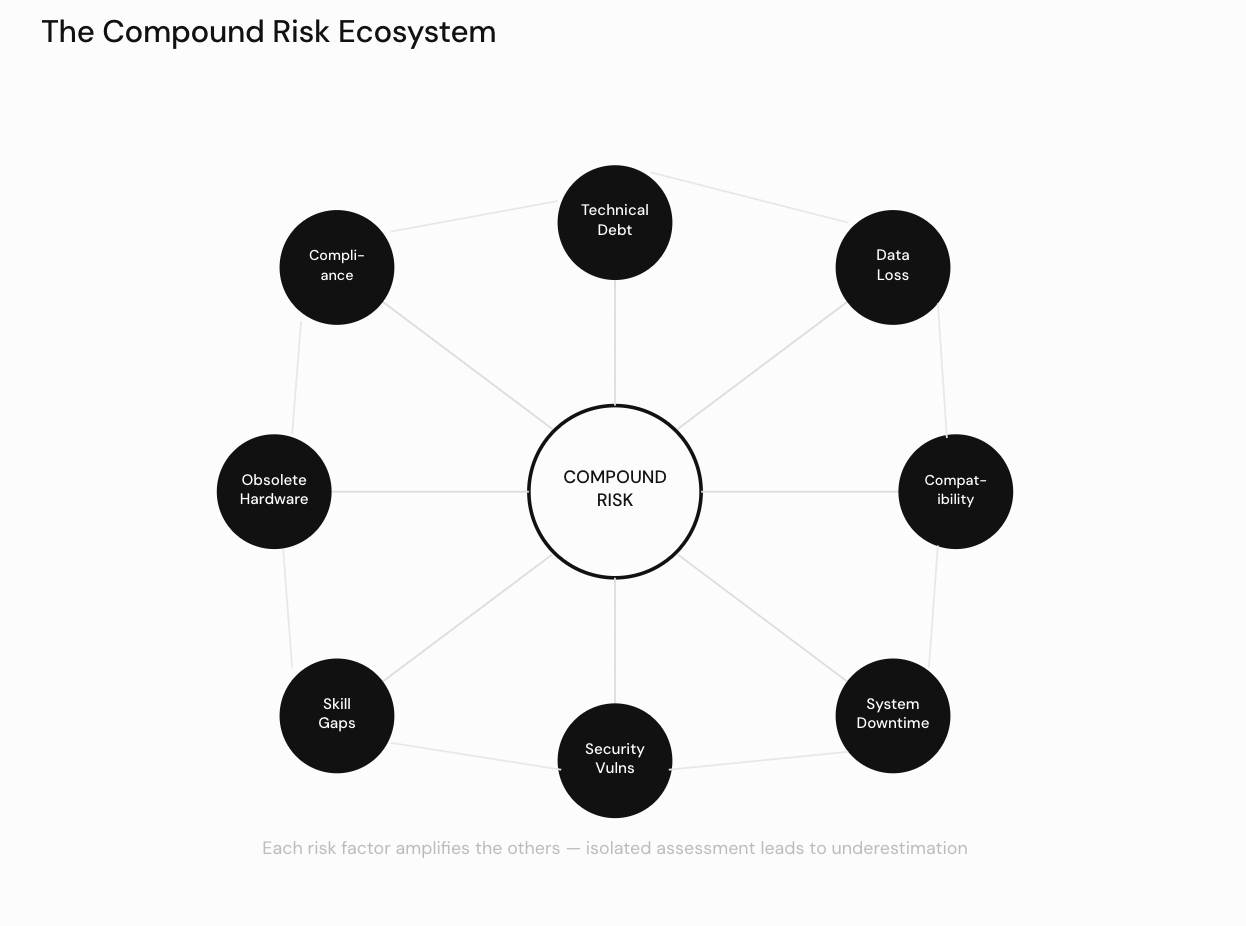

Legacy modernization is not without substantial risk, and the history of enterprise technology is littered with modernization programs that exceeded budgets, missed timelines, or failed to deliver promised capabilities. Understanding these risks is essential for any financial advisory engagement where modernization is a component of the value creation thesis. From due diligence assessments to post-acquisition integration plans, the ability to identify, quantify, and mitigate these challenges directly determines whether a modernization investment creates or destroys value. The risk landscape spans technical debt buried in aging codebases and undocumented APIs, data loss during migration from fragmented data silos, compatibility issues and integration challenges that arise during parallel operations, system downtime risks that threaten revenue and reputation, security vulnerabilities exposed by outdated platforms, compliance updates that shift regulatory goalposts mid-project, skill gaps that constrain execution capacity, obsolete hardware that creates hidden dependencies, and the costly process changes required to keep the business running while transformation unfolds. Each of these risks interacts with the others, creating a compound challenge that demands coordinated management.

Technical Debt and Undocumented Dependencies

Technical debt represents the most pervasive challenge in any legacy modernization initiative. Years of incremental patches, workarounds, and undocumented customizations create systems whose actual behavior diverges significantly from their documented specifications—assuming documentation exists at all. Technical debt accumulates silently: each shortcut taken under deadline pressure, each temporary fix that became permanent, each feature grafted onto an architecture never designed to support it adds another layer of hidden complexity. The compounding nature of technical debt means that the cost of addressing it grows over time—the longer an organization defers modernization, the more expensive and risky it becomes.

Undocumented APIs are a particularly treacherous form of technical debt. These are integration points that downstream systems, partner platforms, and internal workflows depend upon, yet whose contracts, behaviors, error handling, and data formats are known only through institutional memory that may have already left the organization. When modernization teams encounter undocumented APIs, they face a difficult choice: reverse-engineer the existing behavior—a time-consuming and error-prone process—or risk breaking unknown consumers. In large enterprises, a single undocumented API may be called by dozens of systems, any of which could fail silently if the API’s behavior changes during modernization.

Data Integrity and Fragmentation

Data loss during migration remains one of the most feared outcomes, and for good reason. Even partial data loss can invalidate financial records, break regulatory audit trails, and destroy customer trust. The risk of data loss during migration is amplified by the fact that legacy systems often store data in proprietary formats, use implicit encoding conventions, and maintain relationships between records through application logic rather than database constraints. When this data is extracted and transformed for loading into modern systems, subtle errors—character encoding mismatches, truncated fields, timezone misalignment, lost null semantics—can corrupt records in ways that may not be detected for weeks or months.
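A common safeguard is to fingerprint every record before and after migration and compare the two sets. The following is a minimal sketch under stated assumptions: records are plain dictionaries, each has a unique `id` field, and the function names are illustrative rather than taken from any particular migration tool.

```python
import hashlib


def row_fingerprint(row: dict) -> str:
    """Hash a record so that any field-level change alters the fingerprint.

    None is encoded differently from an empty string, so lost null
    semantics show up as a mismatch rather than passing silently.
    """
    parts = []
    for key in sorted(row):
        value = row[key]
        encoded = "\x00NULL" if value is None else f"v:{value}"
        parts.append(f"{key}={encoded}")
    return hashlib.sha256("\x1f".join(parts).encode("utf-8")).hexdigest()


def compare_migration(source: list[dict], target: list[dict], key: str = "id") -> dict:
    """Report records that are missing, unexpected, or changed after migration."""
    src = {row[key]: row_fingerprint(row) for row in source}
    tgt = {row[key]: row_fingerprint(row) for row in target}
    return {
        "missing": sorted(k for k in src if k not in tgt),
        "unexpected": sorted(k for k in tgt if k not in src),
        "changed": sorted(k for k in src if k in tgt and src[k] != tgt[k]),
    }
```

In this sketch a mangled character (for example, `José` arriving as `Jos?`) or a null turned into an empty string lands in the `changed` list, while dropped rows land in `missing`. Running such a comparison at cutover shortens the window in which corruption can go unnoticed.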

Fragmented data silos compound this challenge. In organizations that have grown through acquisition or departmental autonomy, the same business entities—customers, products, transactions—may be represented differently across multiple legacy systems, each maintaining its own version of the truth. Fragmented data silos create reconciliation nightmares during migration: which system holds the authoritative customer record? How should conflicting data be merged? What business rules govern deduplication? These questions must be answered definitively before migration begins, and the process of answering them frequently reveals data quality issues that the organization had never acknowledged.
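Those reconciliation rules can be made explicit in code before any data moves. The sketch below assumes a hypothetical precedence order among three source systems (`billing`, `crm`, `support`) and uses a normalized email address as the matching key; both choices are illustrative, and real programs usually need richer matching logic.

```python
from collections import defaultdict

# Hypothetical rule: systems listed earlier win when field values conflict.
SOURCE_PRECEDENCE = ["billing", "crm", "support"]


def normalize_email(email: str) -> str:
    return email.strip().lower()


def merge_customers(records: list[dict]) -> tuple[dict, dict]:
    """Build one merged record per customer and list the fields that conflicted."""
    grouped = defaultdict(list)
    for record in records:
        grouped[normalize_email(record["email"])].append(record)

    merged, conflicts = {}, {}
    for email, group in grouped.items():
        group.sort(key=lambda r: SOURCE_PRECEDENCE.index(r["source"]))
        golden = {"email": email}
        conflicting = set()
        for record in group:
            for field, value in record.items():
                if field in ("email", "source") or value in (None, ""):
                    continue  # skip keys and empty values
                if field not in golden:
                    golden[field] = value  # highest-precedence non-empty value wins
                elif golden[field] != value:
                    conflicting.add(field)  # keep the winner, but flag for review
        merged[email] = golden
        if conflicting:
            conflicts[email] = sorted(conflicting)
    return merged, conflicts
```

The `conflicts` output matters as much as the merged records: it is the list of disagreements that business owners need to resolve before migration begins.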

Compatibility and Integration Failures

Compatibility issues arise when modernized components must coexist with legacy elements during transitional periods—which, in practice, means during the majority of the modernization timeline. Protocol mismatches, data format incompatibilities, authentication mechanism differences, and divergent error handling conventions all create compatibility issues that can derail integration timelines. A modernized service that expects JSON payloads cannot seamlessly communicate with a legacy system that produces fixed-width flat files without an intermediary translation layer, and each such layer adds complexity, latency, and potential failure points.
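To make the translation layer concrete, here is a minimal sketch that converts one fixed-width record into JSON. The record layout (field names, offsets, and the 38-character length) is invented for illustration; a real layout would come from the legacy system's copybook or file specification.

```python
import json

# Hypothetical record layout: (field name, start offset, end offset).
LAYOUT = [("account_id", 0, 8), ("name", 8, 28), ("balance_cents", 28, 38)]
RECORD_LENGTH = LAYOUT[-1][2]


def parse_fixed_width(line: str) -> dict:
    """Slice one fixed-width record into named fields."""
    if len(line) != RECORD_LENGTH:
        # Reject rather than guess: a short line usually means truncation upstream.
        raise ValueError(f"expected {RECORD_LENGTH} characters, got {len(line)}")
    record = {name: line[start:end].strip() for name, start, end in LAYOUT}
    record["balance_cents"] = int(record["balance_cents"])
    return record


def to_json(line: str) -> str:
    """Translate a legacy record into the JSON payload a modern service expects."""
    return json.dumps(parse_fixed_width(line), sort_keys=True)


if __name__ == "__main__":
    sample = "00001234" + "Jane Doe".ljust(20) + "0000012550"
    print(to_json(sample))
```

Even this small adapter shows where the extra failure points come from: the layout must be kept in sync with the legacy format, and every malformed line needs an explicit handling decision.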

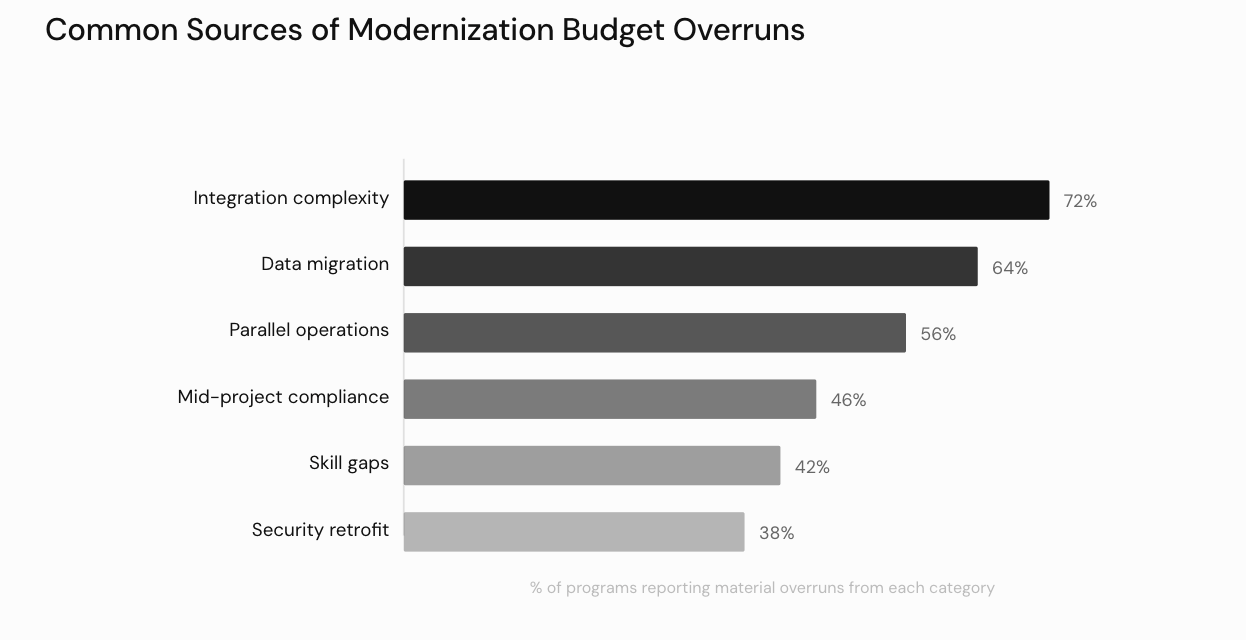

Integration challenges multiply when the organization’s technology ecosystem includes third-party systems, partner connections, regulatory reporting interfaces, and customer-facing applications that cannot tolerate disruption. Every new integration point introduces a potential failure mode, and the compound risk of multiple simultaneous integration changes can overwhelm even well-prepared teams. From a financial advisory perspective, integration challenges are among the most common sources of budget overruns in modernization programs—organizations consistently underestimate the number of integration points, the complexity of maintaining backward compatibility, and the testing effort required to validate that all connections function correctly after changes. For a structured view of how these cost overruns compound across a project, our breakdown of custom software development pricing provides a useful reference framework.

Integration complexity and data migration account for the majority of budget overruns — each amplifying the other when not managed as a coordinated risk workstream.

Operational Continuity and Downtime

Downtime during cutover can translate directly into revenue loss, contractual penalties, and reputational damage. For organizations operating in sectors with strict service-level agreements, such as financial services, healthcare, e-commerce, and telecommunications, even brief outages carry severe financial consequences. The risk extends beyond planned cutover windows: unexpected failures during migration, data synchronization errors between old and new systems, and performance degradation under load can all produce unplanned outages that disrupt business operations. Integration challenges compound the exposure, because failures in newly connected systems can cascade through the broader ecosystem. Compatibility issues between old and new components also frequently surface only under production load, turning what appeared to be a low-risk cutover into an extended outage.

The business must continue to operate, serve customers, and meet regulatory obligations without interruption throughout the modernization process, which means parallel systems must be maintained during the transition. Running dual environments, keeping old and new data stores synchronized, training staff on interim workflows, and operating enhanced monitoring and rollback capabilities all add materially to the overall modernization budget. These costs rise further when fragmented data silos require parallel reconciliation processes, and when undocumented APIs force teams to build and maintain translation layers between old and new system interfaces. Transitional operating costs are frequently excluded from initial estimates, creating a gap between projected and actual expenditure that erodes stakeholder confidence.

Security Exposure and Compliance Pressure

Security vulnerabilities present a dual concern that demands attention at every stage of the modernization lifecycle. Legacy systems often lack modern authentication mechanisms, encryption protocols, intrusion detection capabilities, and audit logging—making them attractive targets for increasingly sophisticated threat actors. Known security vulnerabilities in legacy platforms may remain unpatched because vendor support has ended, because patches would break dependent functionality, or because the organization lacks engineers with the skills to apply them safely. At the same time, the modernization process itself can introduce new security vulnerabilities if security is treated as an afterthought rather than an architectural principle. New APIs, cloud-based services, and microservices interfaces all expand the organization’s attack surface, and each must be secured against both external threats and internal misuse.

Compliance updates add another layer of complexity. Regulatory requirements—data privacy mandates, industry-specific reporting obligations, cross-border data transfer restrictions—evolve independently of modernization timelines. An organization that begins a multi-year modernization program may find that compliance updates enacted midway through the project require architectural changes to the target state, necessitating redesign work that delays delivery and increases costs. The intersection of compliance updates and modernization creates a particularly challenging planning environment, as organizations must build sufficient flexibility into their modernization architectures to accommodate regulatory changes that cannot be predicted at the outset.

The interplay between security vulnerabilities, compliance updates, and technical debt creates a compounding risk profile. Technical debt in security-critical components, such as hardcoded credentials, unpatched libraries, and deprecated encryption algorithms, simultaneously increases vulnerability exposure and complicates compliance with evolving regulatory standards. Addressing these interrelated risks requires a unified approach that treats security, compliance, and technical debt remediation as interconnected workstreams rather than independent concerns. Organizations that manage these risks in isolation frequently discover that fixing one issue inadvertently worsens another. For example, patching a security vulnerability may break an undocumented API that the monitoring systems for obsolete hardware depend upon, causing downtime that the organization is unprepared to manage.

Skill Gaps and Talent Scarcity

Skill gaps represent one of the most underestimated risks in legacy modernization. The engineers who understand legacy systems deeply—their undocumented behaviors, their implicit business rules, their failure modes—frequently lack experience with modern cloud platforms, container orchestration, CI/CD pipelines, and microservices design patterns. Conversely, the modern technologists brought in to lead the transformation may underestimate the complexity embedded in legacy codebases and make architectural decisions that fail to account for critical edge cases. Bridging this skill gap requires dedicated investment in cross-training programs, structured knowledge transfer sessions, and often the creation of hybrid teams that pair legacy experts with modern specialists. In a competitive talent market, the scarcity of engineers who possess both legacy and modern skills can become a binding constraint on modernization velocity, directly impacting project timelines and costs. For organizations evaluating how to structure and cost these teams, our guide on hiring software developers covers the key considerations for building capable engineering teams.

Hardware Dependencies and Physical Infrastructure

Obsolete hardware dependencies can create unexpected constraints when legacy software is tightly coupled to specific physical infrastructure—proprietary processors, specialized I/O controllers, legacy storage arrays, or hardware security modules—that cannot be virtualized or emulated without behavioral changes. Replacing or decommissioning obsolete hardware frequently triggers a chain reaction of software adjustments that must be carefully sequenced and tested. In some cases, obsolete hardware components are no longer manufactured, meaning that failures cannot be resolved through replacement and must instead be addressed through emergency migration—often under time pressure and without adequate planning. For acquirers conducting due diligence, the presence of obsolete hardware dependencies in a target company’s technology stack represents a material risk that should be explicitly quantified and reflected in the deal economics.

Managing the Compound Risk Landscape

The challenges described above do not exist in isolation—they form an interconnected risk ecosystem where each factor amplifies the others. Technical debt in legacy codebases makes it harder to address security vulnerabilities, which in turn complicates compliance updates. Undocumented APIs create integration challenges when modernized components must communicate with legacy systems, increasing compatibility issues that lead to system downtime risks during cutover events. Fragmented data silos elevate the probability of data loss during migration, while obsolete hardware constrains the available modernization pathways. Skill gaps slow the pace at which any of these risks can be addressed, and the costly process changes required to maintain business continuity during transformation consume budget that could otherwise be directed toward resolving technical debt or closing security vulnerabilities.

For financial advisors, the critical insight is that modernization risk cannot be assessed one category at a time. A credible risk evaluation must model the interactions: how does the presence of undocumented APIs affect the probability and cost of integration challenges? How do skill gaps extend timelines, and how do extended timelines increase the likelihood that compliance updates will require mid-project redesign? How does the reliance on obsolete hardware constrain whether a cloud migration or on-premises transformation is feasible? Organizations that build integrated risk models, accounting for the compounding effects of technical debt, fragmented data silos, security vulnerabilities, compatibility issues, and system downtime risks, produce more accurate cost estimates and more realistic implementation plans than those that evaluate each risk category independently. When selecting a development partner capable of navigating this complexity, the criteria outlined in our guide on how to choose a software development company provide a rigorous evaluation framework. The most successful modernization programs establish dedicated risk management workstreams that continuously monitor all risk categories, from data loss during migration to costly process changes, and adjust plans proactively as conditions evolve.

The compound risk ecosystem — each of the eight risk categories connects to every other, meaning isolated mitigation strategies consistently underestimate total exposure.
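The difference between independent and integrated risk assessment can be shown with a deliberately small model. In the sketch below, every probability, cost, and amplification factor is an invented illustration, and the structure (each risk has at most one upstream driver that multiplies its probability) is a simplifying assumption rather than an established method.

```python
# name: (base probability, cost impact in $M); illustrative numbers only.
# Drivers must be listed before the risks they amplify.
RISKS = {
    "undocumented_apis": (0.50, 2.0),
    "integration_failure": (0.20, 4.0),
    "downtime": (0.10, 6.0),
}

# (driver, dependent): factor applied to the dependent's probability if the driver occurs.
AMPLIFIERS = {
    ("undocumented_apis", "integration_failure"): 2.0,
    ("integration_failure", "downtime"): 3.0,
}


def independent_expected_cost(risks: dict) -> float:
    """Expected cost when each risk category is evaluated in isolation."""
    return sum(p * cost for p, cost in risks.values())


def marginal_probabilities(risks: dict, amplifiers: dict) -> dict:
    """Probability of each risk after accounting for its upstream driver."""
    drivers = {dep: (drv, factor) for (drv, dep), factor in amplifiers.items()}
    marginals = {}
    for name, (p, _) in risks.items():
        if name in drivers:
            driver, factor = drivers[name]
            p_driver = marginals[driver]
            # Law of total probability over "driver occurs" / "driver does not occur".
            p = p_driver * min(1.0, p * factor) + (1 - p_driver) * p
        marginals[name] = p
    return marginals


def integrated_expected_cost(risks: dict, amplifiers: dict) -> float:
    """Expected cost once interactions between risks are modeled."""
    marginals = marginal_probabilities(risks, amplifiers)
    return sum(marginals[name] * cost for name, (_, cost) in risks.items())
```

With these assumed inputs the independent view gives an expected cost of about $2.4M, while the integrated view gives about $3.16M. The roughly 30% gap is the exposure that isolated assessment leaves out of the estimate.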

Cost and ROI Considerations

For financial advisors and their clients, the economic framing of modernization is paramount. Every modernization initiative must ultimately be justified in the language of returns, and the analytical rigor applied to this justification directly determines whether the investment creates or destroys value.

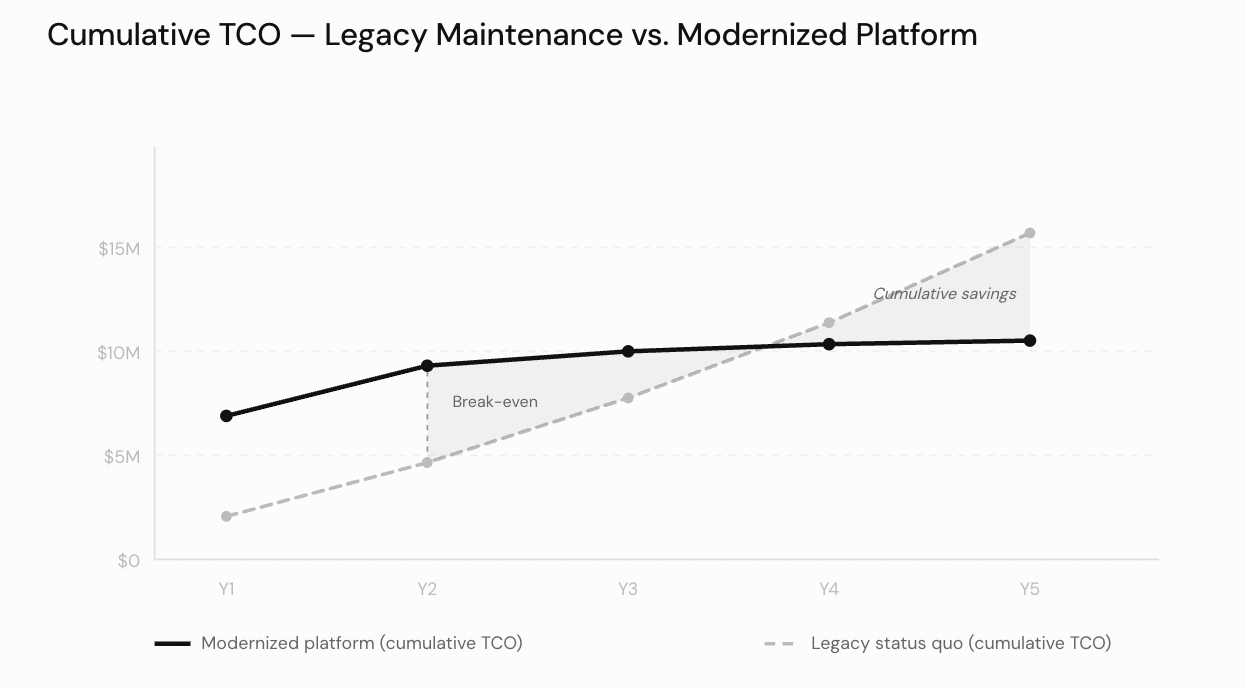

Total Cost of Ownership

The total cost of ownership (TCO) analysis is the foundational exercise for any modernization business case. A credible TCO model must account for one-time costs—including assessment, design, migration execution, testing, training, data conversion, and organizational change management—as well as the ongoing maintenance expenses of the new environment, such as cloud subscription fees, managed service charges, monitoring tooling, and the engineering headcount required to operate and evolve the modernized platform. For a granular view of how engineering labor costs break down across different engagement models, our analysis of software development cost per hour provides useful benchmarks for building headcount assumptions into a TCO model. But a complete TCO analysis must equally account for the costs of inaction: the escalating maintenance burden of legacy systems, the opportunity cost of delayed product launches and market expansions that cannot be pursued while resources are consumed by legacy upkeep, and the risk-adjusted cost of security incidents and compliance failures that become increasingly probable as legacy systems age. The opportunity cost dimension is frequently underweighted in modernization business cases, yet it often represents the single largest economic factor—the revenue that was never earned because the organization could not move fast enough.

Cumulative TCO analysis: modernization carries higher upfront costs but reaches break-even by Year 2, generating compounding savings as legacy maintenance costs continue to escalate.
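A cumulative comparison of this kind is straightforward to prototype. The sketch below assumes legacy maintenance escalates by a fixed percentage each year and the modernized platform has a one-time cost plus a flat annual run cost; the function names and all figures are hypothetical and exclude opportunity cost and risk-adjusted incident cost for brevity.

```python
from typing import Optional


def cumulative_legacy(first_year_cost: float, escalation: float, years: int) -> list[float]:
    """Running total of legacy maintenance that escalates every year."""
    total, path = 0.0, []
    for year in range(years):
        total += first_year_cost * (1 + escalation) ** year
        path.append(total)
    return path


def cumulative_modernized(upfront: float, annual_run_cost: float, years: int) -> list[float]:
    """Running total of one-time modernization cost plus flat annual run cost."""
    return [upfront + annual_run_cost * (year + 1) for year in range(years)]


def break_even_year(legacy: list[float], modernized: list[float]) -> Optional[int]:
    """First year (1-based) in which cumulative modernized cost is no higher than legacy."""
    for year, (legacy_total, modern_total) in enumerate(zip(legacy, modernized), start=1):
        if modern_total <= legacy_total:
            return year
    return None


if __name__ == "__main__":
    # Illustrative inputs in $M: legacy 10 in year one, rising 10% per year;
    # modernization 8 upfront plus 6 per year to run.
    legacy = cumulative_legacy(10.0, 0.10, 5)
    modern = cumulative_modernized(8.0, 6.0, 5)
    print("Break-even year:", break_even_year(legacy, modern))
```

The break-even point is sensitive to the escalation assumption, so it is worth rerunning the comparison with several rates before relying on a single year.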

Budget Planning and Prioritization

Budget constraints are a reality in virtually every modernization engagement, which makes phasing and prioritization essential. Not every system needs to be modernized simultaneously, and not every system warrants the same level of investment. The current state of the application—including its technical health, its strategic importance to the business, its maintenance burden, the severity of its security exposure, and the availability of alternative solutions—should drive prioritization decisions. A robust modernization strategy aligns the sequencing of modernization efforts with business goals, ensuring that the highest-value, highest-risk systems receive attention first. This alignment between modernization strategy and business goals is critical: modernization programs that are disconnected from strategic priorities struggle to maintain executive sponsorship and organizational momentum, often stalling or being cancelled before they deliver meaningful value. Budget planning should also account for contingency reserves, as modernization projects frequently encounter unforeseen complexity that requires additional investment.
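A simple phasing exercise can be sketched as follows. The scoring rule (business value multiplied by risk, each on a 1 to 5 scale), the greedy selection, and the system names are assumptions made for illustration; a real portfolio would use the criteria listed above with weights agreed by the steering group.

```python
def plan_phase(systems: list[dict], budget: float, contingency_reserve: float) -> tuple[list[str], float]:
    """Pick the highest-priority systems that fit the budget after holding back a reserve.

    Each system is a dict with 'name', 'business_value' (1-5), 'risk' (1-5), and 'cost'.
    Returns the selected system names and the amount committed.
    """
    available = budget - contingency_reserve
    # Highest value-times-risk first: a hypothetical priority rule.
    ranked = sorted(systems, key=lambda s: s["business_value"] * s["risk"], reverse=True)

    selected, committed = [], 0.0
    for system in ranked:
        if committed + system["cost"] <= available:
            selected.append(system["name"])
            committed += system["cost"]
    return selected, committed


if __name__ == "__main__":
    portfolio = [
        {"name": "core_ledger", "business_value": 5, "risk": 5, "cost": 50},
        {"name": "crm", "business_value": 4, "risk": 3, "cost": 40},
        {"name": "reporting", "business_value": 3, "risk": 3, "cost": 30},
        {"name": "hr_portal", "business_value": 2, "risk": 2, "cost": 20},
    ]
    print(plan_phase(portfolio, budget=100, contingency_reserve=20))
```

In this example the second-ranked system does not fit once the reserve is set aside, so a lower-ranked but cheaper system is funded instead. Trade-offs like this are worth surfacing explicitly to sponsors.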

Measuring Return on Investment

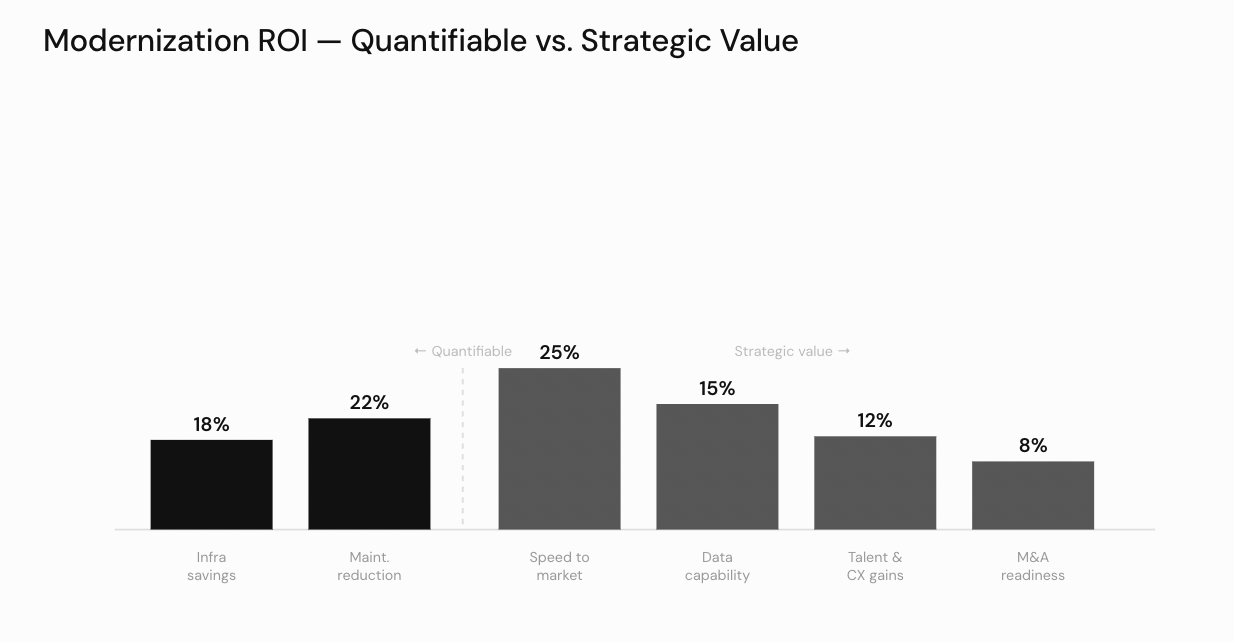

Return on investment (ROI) calculations for modernization must extend beyond simple cost reduction. While reduced infrastructure spend and lower ongoing maintenance expenses are the most easily quantifiable benefits, the strategic value of modernization—faster innovation cycles, improved customer experience, enhanced data capabilities, reduced integration costs for future acquisitions, and improved talent attraction—often represents the larger share of economic benefit. Quantifying these strategic benefits requires more sophisticated modeling, including scenario analysis, market benchmarking, and explicit assumptions about competitive dynamics. Risk tolerance varies across organizations, and the ROI framework must incorporate risk-adjusted scenarios that account for the probability and impact of modernization failures, delays, and scope changes. Organizations with low risk tolerance may prefer conservative strategies that deliver smaller but more certain returns, while organizations with higher risk tolerance may pursue more ambitious transformations with greater upside potential.

Modernization ROI decomposed: speed-to-market gains represent the largest single benefit category, while strategic value components collectively outweigh hard cost savings.
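Risk-adjusted ROI can be approximated by weighting each scenario's benefit and cost by its probability. The scenarios and figures below are invented for illustration, and the formula used (expected net benefit divided by expected cost) is one reasonable convention among several.

```python
def risk_adjusted_roi(scenarios: list[dict]) -> float:
    """Probability-weighted ROI across scenarios.

    Each scenario is a dict with 'probability', 'benefit', and 'cost'.
    Probabilities must sum to 1.
    """
    total_probability = sum(s["probability"] for s in scenarios)
    if abs(total_probability - 1.0) > 1e-9:
        raise ValueError("scenario probabilities must sum to 1")
    expected_benefit = sum(s["probability"] * s["benefit"] for s in scenarios)
    expected_cost = sum(s["probability"] * s["cost"] for s in scenarios)
    return (expected_benefit - expected_cost) / expected_cost


if __name__ == "__main__":
    # Hypothetical program in $M: on-plan delivery, a delayed delivery, and an outright failure.
    scenarios = [
        {"name": "on_plan", "probability": 0.6, "benefit": 30.0, "cost": 10.0},
        {"name": "delayed", "probability": 0.3, "benefit": 18.0, "cost": 14.0},
        {"name": "failed", "probability": 0.1, "benefit": 0.0, "cost": 12.0},
    ]
    print(f"Risk-adjusted ROI: {risk_adjusted_roi(scenarios):.1%}")
```

Under these assumptions the on-plan case alone would show a 200% return, while the risk-adjusted figure is roughly 105%. A low-risk-tolerance organization would compare that adjusted figure, not the headline one, against its hurdle rate.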

Long-Term Cost Structures and Architecture Decisions

Long-term costs deserve particular scrutiny because the decisions made during modernization lock in cost structures for years or even decades. Cloud migration can reduce infrastructure costs initially, but without disciplined cost management practices—including reserved capacity planning, resource right-sizing, and automated cost governance—cloud spending can escalate rapidly as usage grows and teams provision resources without adequate oversight. The system architecture chosen during modernization is among the most consequential financial decisions in the entire initiative: an architecture that appears economical at launch may prove expensive at scale, and vice versa. Microservices architectures, for example, can deliver significant long-term savings through independent scaling and targeted optimization, but they introduce operational complexity and tooling costs that must be factored into the long-term cost model. Adopting iterative delivery practices—such as those described in our guide on what is a sprint in software development—allows modernization programs to surface architectural cost signals early and course-correct before expensive structural decisions become permanent. Modeling multiple growth scenarios during the planning phase, and stress-testing architectural choices against each scenario, is essential for ensuring that the modernized system architecture remains economically sustainable as the business evolves.
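The "economical at launch, expensive at scale" effect can be illustrated with a two-parameter cost model. The sketch assumes each architecture has a fixed annual cost plus a constant cost per unit of load, which is a strong simplification; the architecture labels and all numbers are hypothetical.

```python
from typing import Optional


def annual_cost(fixed_cost: float, cost_per_unit: float, units: float) -> float:
    """Annual cost under a simple fixed-plus-variable model."""
    return fixed_cost + cost_per_unit * units


def crossover_units(fixed_a: float, unit_a: float, fixed_b: float, unit_b: float) -> Optional[float]:
    """Load at which architectures A and B cost the same, if such a point exists."""
    if unit_a == unit_b:
        return None  # parallel cost lines never cross
    units = (fixed_b - fixed_a) / (unit_a - unit_b)
    return units if units > 0 else None


if __name__ == "__main__":
    # Hypothetical: option A has low fixed cost but high unit cost; option B the reverse.
    option_a = (100.0, 0.50)
    option_b = (400.0, 0.20)
    print("Crossover load:", crossover_units(*option_a, *option_b))
    for load in (500, 2000):  # growth scenarios to stress-test
        print(load, annual_cost(*option_a, load), annual_cost(*option_b, load))
```

Running the same comparison across several growth scenarios shows which side of the crossover the business is likely to occupy, which is the stress test the paragraph above recommends.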

Drivers and Benefits of Modernization

The impetus for modernization rarely originates from a single catalyst. Instead, it tends to emerge from the convergence of multiple pressures—competitive dynamics, regulatory evolution, talent constraints, and the accelerating expectations of digitally fluent customers and counterparties.

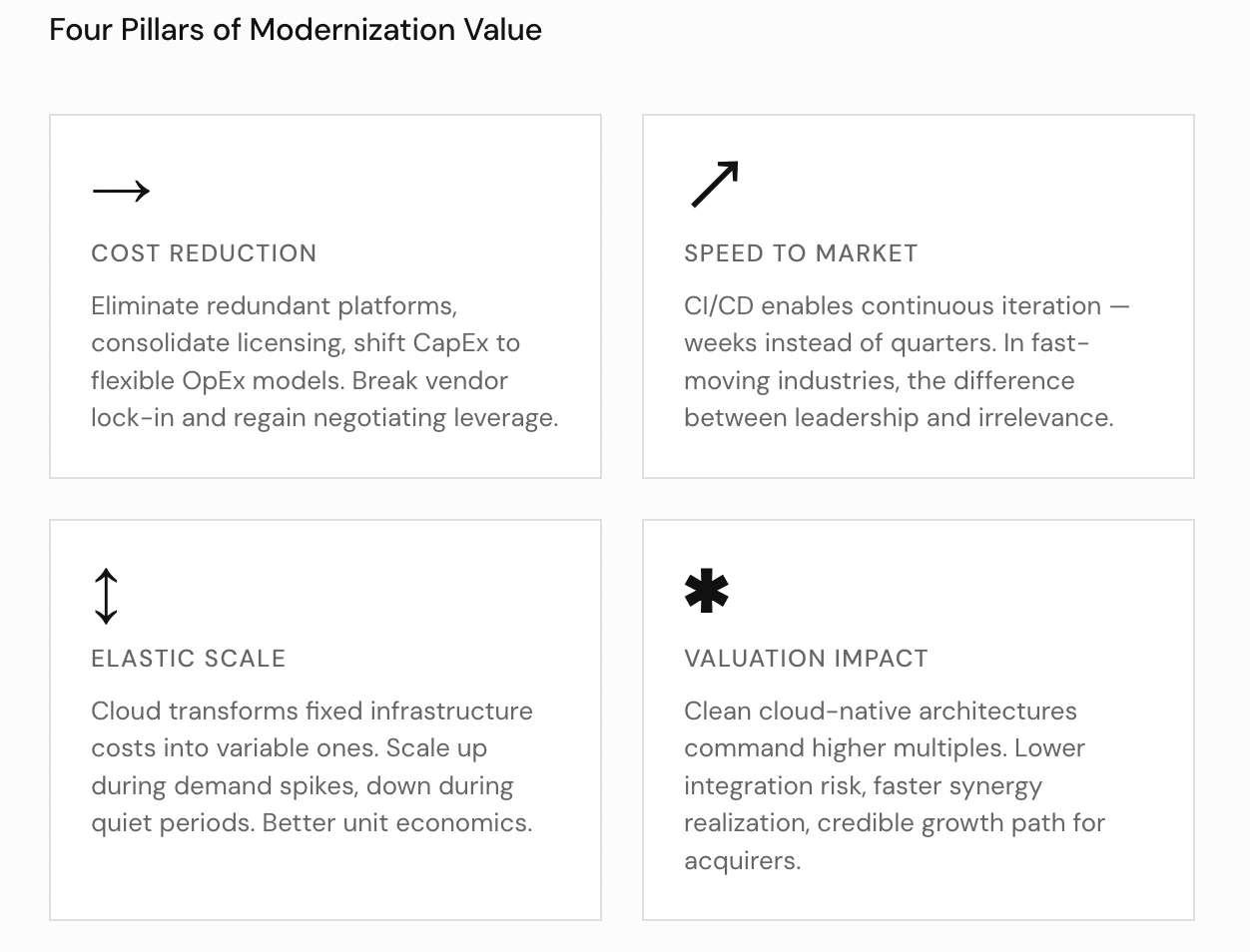

The four pillars of modernization value are cost reduction, speed to market, elastic scale, and valuation impact; each delivers value on its own and reinforces the others.

Reducing Costs and Eliminating Lock-In

From a financial advisory perspective, one of the primary defensive drivers is the need to address escalating operational costs and reduce vendor lock-in that limits negotiating leverage. Legacy environments often depend on proprietary platforms with punitive licensing models and limited competitive alternatives. Modernization breaks these constraints, giving organizations the freedom to choose best-of-breed solutions and negotiate from a position of strength. Infrastructure modernization and database modernization initiatives, when executed thoughtfully, reduce costs by eliminating redundant platforms, consolidating licensing obligations, and shifting from capital expenditure to more flexible consumption-based models. Organizations that have grown dependent on legacy data tools such as spreadsheets face similar lock-in dynamics; our guide on how to migrate from Excel to custom software illustrates the planning principles that apply across the modernization spectrum.

Accelerating Speed to Market

On the offensive side, modernization unlocks capabilities that directly enhance enterprise value. CI/CD practices enable continuous iteration rather than costly, infrequent release cycles, dramatically accelerating time-to-market for new products and features. In industries where the window of competitive advantage is measured in weeks rather than quarters, this acceleration can be the difference between market leadership and irrelevance.

Cloud Enablement and Global Reach

Cloud-based legacy modernization provides future-proof deployment architectures that adapt to demand fluctuations without the capital expenditure associated with on-premises capacity planning. Organizations gain global availability through distributed infrastructure and content delivery networks, enabling them to serve customers worldwide with consistent performance and reliability. The ability to scale elastically—up during demand spikes, down during quiet periods—transforms infrastructure from a fixed cost into a variable one, fundamentally improving unit economics.

Custom Modernization and Strategic Advantage

Custom software modernization—tailoring solutions to an organization’s specific workflows rather than conforming to generic vendor offerings—creates defensible competitive advantages that are difficult for rivals to replicate. Understanding the full range of capabilities a development partner can deliver is essential before committing to a modernization approach; our overview of what a software development company does provides a useful reference for aligning expectations with delivery capacity. Perhaps most importantly for deal economics, a modernized technology stack fundamentally changes how potential acquirers and investors perceive a business. Companies with clean, well-documented, cloud-native architectures command higher multiples because they present lower integration risk, faster synergy realization, and a more credible path to growth. Technical debt, by contrast, functions as a hidden discount on enterprise value—one that sophisticated buyers will quantify and deduct.

Implementation Steps and Best Practices

Successful modernization follows a disciplined sequence of activities, and the organizations that achieve the best outcomes are those that invest adequately in the early assessment and planning phases rather than rushing to implementation. The implementation lifecycle begins with application assessment to identify every modernization candidate and proceeds through feasibility analysis and cloud migration architecture decisions. It then establishes the enabling infrastructure of containerization, CI/CD tools, and DevOps pipelines, executes data migration with rigorous validation, adopts an incremental modernization approach to reduce risk, and weaves security measures throughout every phase. Whether the organization pursues a hybrid cloud approach or a full cloud-native approach, the sequencing and discipline of these steps determine whether modernization delivers its promised value or devolves into an expensive, disruptive exercise in frustration.

Application Assessment and Candidate Identification

The journey begins with a comprehensive application assessment—a systematic inventory and evaluation of every system in the portfolio, documenting its business criticality, technical condition, integration dependencies, data sensitivity, security posture, and modernization complexity. A thorough application assessment examines not only the technology stack of each system but also its organizational context: who depends on it, how it is supported, what institutional knowledge is required to operate it, and what would happen if it became unavailable. The application assessment should produce a clear ranking of every system in the portfolio, distinguishing between systems that are strategic assets, systems that are operational necessities, and systems that are candidates for retirement.

This ranking directly informs the identification of each modernization candidate, meaning the systems that warrant active investment in transformation. Not every legacy system is a modernization candidate: some may be better served by replacement with commercial alternatives, others may be approaching end-of-life and can simply be decommissioned, and still others may be functioning adequately and can be left in place while higher-priority candidates receive attention. The discipline of explicitly identifying and prioritizing modernization candidates prevents organizations from spreading resources too thinly across too many simultaneous initiatives. For each modernization candidate, the application assessment should document the recommended approach (cloud migration, containerization, refactoring, or replacement) and the expected dependencies on other infrastructure changes, such as data migration and DevOps pipeline implementation.

Feasibility Analysis and Risk Evaluation

A rigorous feasibility analysis accompanies the application assessment, validating that the proposed modernization approach for each candidate is technically sound, economically justified, and organizationally achievable. The feasibility analysis should evaluate multiple modernization strategies for each modernization candidate—rehosting, replatforming, refactoring, re-architecting, rebuilding, or replacing—and compare them across dimensions including cost, risk, timeline, skill requirements, and expected business impact. A feasibility analysis that considers only one approach is not a feasibility analysis at all; it is a rationalization of a decision already made.

The feasibility analysis should also explicitly address organizational readiness: does the team have the skills required for containerization and cloud-native implementation? Are the necessary CI/CD tools and DevOps pipelines in place or budgeted? Is executive sponsorship strong enough to sustain a multi-quarter effort? Are there competing priorities that could divert resources midstream? For modernization candidates requiring cloud migration, the feasibility analysis must evaluate whether a hybrid cloud approach or full public cloud migration is more appropriate given the organization’s data sovereignty and compliance requirements. Organizations that skip or abbreviate the feasibility analysis phase consistently encounter surprises during implementation, from unexpected data migration complexity to insufficient security measures, that could have been anticipated and mitigated with more thorough upfront analysis.
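One simple way to make the multi-strategy comparison explicit is a weighted scoring matrix. The sketch below uses the comparison dimensions named in this section; the weights, the 1-to-5 favorability scale (5 is most favorable, so a cheap option scores high on cost), and the function names are assumptions chosen for illustration.

```python
# Dimensions come from the text; the weights are invented for this example.
WEIGHTS = {
    "cost": 0.25,
    "risk": 0.25,
    "timeline": 0.20,
    "skills": 0.10,
    "business_impact": 0.20,
}


def weighted_score(scores: dict, weights: dict = WEIGHTS) -> float:
    """Combine per-dimension favorability scores into one number."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    return sum(weights[d] * scores[d] for d in weights)


def compare_strategies(options: dict) -> list:
    """Rank strategies for one candidate, best first."""
    # Mirrors the point in the text: a single-option analysis is not a comparison.
    if len(options) < 2:
        raise ValueError("compare at least two strategies")
    ranked = [(name, round(weighted_score(s), 2)) for name, s in options.items()]
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)
```

A score like this supports the discussion rather than replacing it: organizational readiness questions such as sponsorship and competing priorities usually need qualitative judgment alongside the numbers.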

Cloud Migration Planning and Architecture

Cloud migration planning requires careful attention to the target environment’s capabilities, constraints, pricing models, and operational requirements. A cloud migration is not simply a change of hosting location; it is a fundamental shift in how infrastructure is provisioned, managed, secured, and paid for. Organizations must decide among public, private, and hybrid cloud configurations based on their specific requirements for data sovereignty, latency, regulatory compliance, and cost optimization.

A hybrid cloud approach—maintaining some workloads on-premises or in private cloud while migrating others to public cloud—is often the most practical path for organizations with complex regulatory requirements or legacy systems that cannot be immediately migrated. The hybrid cloud approach allows organizations to capture cloud benefits for suitable workloads while preserving existing infrastructure investments for systems that require more time or architectural work before they can be moved.

A cloud-native approach—designing applications specifically for cloud environments rather than simply relocating them—delivers the greatest long-term benefits but demands deeper architectural investment and a willingness to rethink established patterns. Cloud-native applications leverage managed services, auto-scaling, distributed storage, and event-driven architectures to achieve levels of resilience, scalability, and cost efficiency that are difficult to replicate with traditional deployment models. The cloud-native approach also integrates naturally with containerization, CI/CD tools, and DevOps pipelines, creating a cohesive operational model where infrastructure, application code, and deployment automation are managed as a unified whole. The choice between a lift-and-shift cloud migration and a cloud-native approach should be made deliberately for each modernization candidate, based on the system’s strategic importance, the results of the feasibility analysis, and the organization’s willingness to invest in deeper transformation. Many organizations adopt an incremental modernization strategy, starting with a basic cloud migration and progressively evolving toward a cloud-native approach as their teams build experience and confidence with cloud platforms.

Containerization and Orchestration

Containerization has become a standard enabler of modernization, packaging applications and their dependencies into portable, isolated units that run consistently across development, testing, staging, and production environments. By eliminating the “works on my machine” problem that plagues traditional deployments, containerization dramatically reduces the friction of moving applications between environments and accelerates the path from code commit to production deployment. Containerization also provides a natural boundary for modernization: legacy applications can be containerized as a first step, stabilizing their deployment model before deeper architectural changes are undertaken.

Container orchestration platforms—most notably Kubernetes—extend the benefits of containerization by automating deployment, scaling, health monitoring, and recovery across clusters of containers. For organizations pursuing microservices architectures, containerization and orchestration together provide the infrastructure foundation that makes independently deployable services operationally viable at scale.

CI/CD Tools and DevOps Pipelines

Combined with containerization, CI/CD tools and DevOps pipelines enable the rapid, reliable, and repeatable deployment cycles that modern business demands. CI/CD tools automate the process of building, testing, and packaging application code each time a developer commits a change, ensuring that defects are caught early and that the codebase remains in a consistently deployable state. DevOps pipelines extend this automation through to production deployment, incorporating automated security scanning, compliance verification, performance testing, and staged rollout strategies that minimize the risk of production incidents.

The integration of CI/CD tools and DevOps pipelines into the modernization process is not merely a technical improvement—it is a cultural and organizational transformation. Teams accustomed to infrequent, manually coordinated release cycles must adopt new workflows, new responsibilities, and new metrics. DevOps pipelines also create powerful feedback loops: automated monitoring detects issues in production within minutes, automated rollback capabilities limit the blast radius of failures, and deployment telemetry provides data that continuously improves the reliability and velocity of future releases. Understanding how iterative delivery practices underpin this transformation is essential; our guide on what is a sprint in software development explains the sprint-based cadence that drives disciplined, incremental delivery. Organizations that invest in mature CI/CD tools and DevOps pipelines during modernization build a capability that delivers compounding returns for years afterward.
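The staged rollout and automated rollback behavior described in this section can be illustrated with a small control loop. This is a sketch under stated assumptions: `error_rate_for` stands in for whatever monitoring query a real pipeline would run, and the traffic stages and the 1 percent error threshold are hypothetical values.

```python
def staged_rollout(stages, error_rate_for, max_error_rate=0.01):
    """Advance a release through traffic stages, rolling back on bad telemetry.

    stages: traffic percentages to expose in order, e.g. [5, 25, 100].
    error_rate_for: callable taking a percentage and returning the observed
        error rate at that stage (a placeholder for real monitoring).
    """
    completed = []
    for pct in stages:
        observed = error_rate_for(pct)
        if observed > max_error_rate:
            # Stop early so the failure only reaches the current traffic slice.
            return {"status": "rolled_back", "failed_at": pct, "completed": completed}
        completed.append(pct)
    return {"status": "promoted", "failed_at": None, "completed": completed}
```

The design choice worth noting is that the rollback decision is automatic and based on telemetry, which is what limits the blast radius without waiting for manual coordination.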

Data Migration Strategy and Execution

Data migration warrants its own dedicated workstream with independent planning, testing, validation, and rollback protocols. The complexity of transforming data from legacy schemas to modern structures, while maintaining referential integrity, business meaning, and regulatory compliance, is consistently underestimated—often by a factor of two or more. Data migration must address not only the structure and content of data but also its lineage, access controls, retention policies, and the business rules that govern its interpretation.

Effective data migration begins with comprehensive data profiling—understanding what data exists, where it resides, how it relates to other data, and what quality issues are present. This profiling informs the design of extract-transform-load processes that must handle edge cases, exceptions, and data quality anomalies without losing information or introducing corruption. Validation protocols should compare source and target data sets at multiple levels—record counts, aggregate checksums, sample-based detailed comparison—to ensure that the migration has been executed correctly. Organizations that treat data migration as a secondary concern within a broader cloud migration frequently discover, too late, that their modernized applications are operating on incomplete or inaccurate data.
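The three validation levels mentioned above (record counts, aggregate checksums, and sample-based comparison) might be sketched as follows. The sketch assumes that source and target rows have already been normalized into comparable tuples keyed by a sortable primary key, which in a real migration with schema changes is itself a significant piece of work; the function names are illustrative.

```python
import hashlib
import random


def row_digest(row: tuple) -> str:
    """Hash one normalized row."""
    return hashlib.sha256(repr(row).encode("utf-8")).hexdigest()


def aggregate_checksum(rows) -> str:
    """Order-independent checksum over a collection of rows."""
    combined = hashlib.sha256()
    # Sorting the per-row digests makes the result independent of row order.
    for digest in sorted(row_digest(r) for r in rows):
        combined.update(digest.encode("ascii"))
    return combined.hexdigest()


def validate_migration(source: dict, target: dict, sample_size: int = 100, seed: int = 0) -> list:
    """Compare two {primary_key: row_tuple} mappings; return a list of findings."""
    findings = []
    # Level 1: record counts.
    if len(source) != len(target):
        findings.append(f"record count mismatch: {len(source)} vs {len(target)}")
    # Level 2: aggregate checksum across all rows.
    if aggregate_checksum(source.values()) != aggregate_checksum(target.values()):
        findings.append("aggregate checksum mismatch")
    # Level 3: detailed comparison of a reproducible random sample.
    rng = random.Random(seed)
    keys = sorted(source)
    for key in rng.sample(keys, min(sample_size, len(keys))):
        if target.get(key) != source[key]:
            findings.append(f"row {key!r} differs or is missing in target")
    return findings
```

An empty list means all three checks passed for the data supplied. At production scale the same checks would typically run inside the databases rather than in application memory.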

Incremental Modernization and Phased Delivery

Incremental modernization, which migrates data and functionality in progressive stages rather than in a single massive cutover, reduces risk significantly, though it introduces complexity in managing the coexistence of old and new systems during transitional periods. Each increment of an incremental modernization program should deliver measurable business value: a completed data migration for a critical dataset, a modernization candidate fully transitioned to a cloud-native approach, or a legacy dependency retired and replaced by a modern, container-ready service. This rhythm of tangible progress sustains organizational support for the initiative and provides regular opportunities to validate assumptions, adjust plans, and incorporate lessons learned.

Incremental modernization also enables organizations to manage cash flow more effectively, spreading investment across multiple budget cycles rather than requiring a single large capital commitment. The approach works particularly well when combined with mature CI/CD tools and DevOps pipelines that allow each increment to be deployed, tested, and rolled back independently. From a financial advisory perspective, incremental modernization aligns well with the phased value creation plans that private equity sponsors and corporate development teams typically prefer, as it allows modernization milestones to be linked to specific business outcomes and financial metrics. Each phase of the incremental modernization journey should build on the capabilities established in previous phases—early investments in containerization and cloud migration infrastructure pay dividends in later phases when more complex application assessment findings are addressed.

Security Measures Throughout the Lifecycle

Security measures must be woven throughout every phase of the modernization lifecycle, not treated as a final checkpoint before go-live. Every modernization decision—from API design to data storage architecture to identity and access management—has security implications that must be evaluated in context. Effective security measures during modernization include threat modeling for the target architecture, automated security scanning integrated into CI/CD tools and DevOps pipelines, encryption of data in transit and at rest, implementation of least-privilege access controls, and comprehensive audit logging that supports both operational monitoring and regulatory compliance.

Adopting a security-by-design mindset ensures that the modernized environment is not only more capable than its predecessor but also more resilient against the increasingly sophisticated threat landscape that modern enterprises face. Security measures should be validated through penetration testing, vulnerability assessments, and tabletop exercises that simulate real-world attack scenarios against the modernized platform. Organizations that defer security measures to late stages of modernization frequently discover that retrofitting security into an already-built system is far more expensive and disruptive than incorporating it from the outset.

Integrating the Implementation Lifecycle

The implementation steps described above are not independent activities; they form an interconnected lifecycle where the quality of each phase determines the success of those that follow. A thorough application assessment produces the data that makes feasibility analysis meaningful, and feasibility analysis identifies which modernization candidates should pursue cloud migration, which require containerization as a first step, and which demand a full cloud-native approach. The cloud migration architecture, whether a hybrid cloud approach or full public cloud, shapes the containerization strategy, which in turn determines the requirements for CI/CD tools and DevOps pipelines. Data migration planning must be coordinated with incremental modernization phasing to ensure that data is available when and where the modernized applications need it. Security measures must be embedded in the CI/CD tools and DevOps pipelines from the beginning, not added after the deployment infrastructure is established.

Organizations that treat these steps as a checklist of independent tasks (completing application assessment before thinking about containerization, establishing DevOps pipelines without considering data migration dependencies, or deferring security measures until after cloud migration is complete) consistently encounter integration failures, rework, and delays. The most effective modernization programs establish a unified program management office that coordinates across all workstreams, ensuring that each modernization candidate progresses through the lifecycle in a coherent sequence. The feasibility analysis should establish this sequencing for each candidate, and the incremental modernization plan should define how the organization builds capability progressively: starting with containerization and cloud migration infrastructure for the first wave of candidates, extending CI/CD tools and DevOps pipelines as the team matures, and scaling data migration and security capabilities as the program expands to additional modernization candidates. This integrated approach, grounded in rigorous application assessment, validated through feasibility analysis, and executed through disciplined incremental modernization, is what distinguishes successful modernization programs from costly failures.

Industry Use Cases and Services

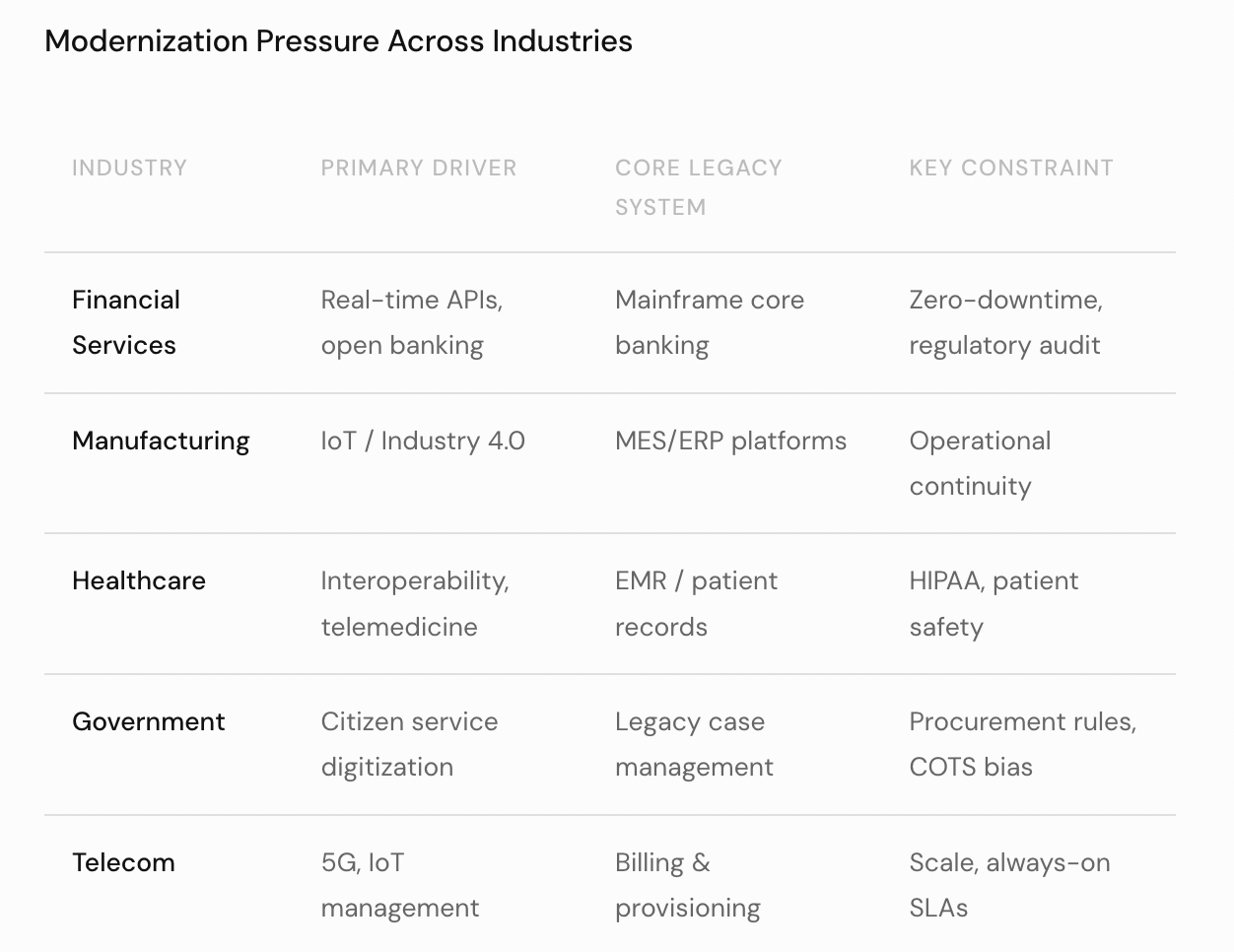

Legacy modernization is not an abstract concern confined to the technology sector. It is a pressing operational and strategic imperative across virtually every industry vertical, and the specific drivers and constraints vary significantly by sector. Understanding these industry-specific dynamics is essential for financial advisors evaluating modernization investments, as the risk profile, regulatory landscape, and expected return on modernization differ substantially across verticals. Across all industries, the common patterns include monolithic applications that resist change, core banking systems and MES/ERP platforms that anchor mission-critical operations, regulatory compliance and data protection mandates that constrain migration options, and the need for application programming interfaces (APIs) that enable integration with modern ecosystems. Professional services—from legacy system modernization assessment to managed database service offerings, microservices adoption programs, cloud migration execution, and commercial off-the-shelf product implementation—provide the external capabilities that most organizations need to supplement their internal teams.

Financial Services and Core Banking

Financial services institutions face perhaps the most acute modernization pressure. Core banking systems, many of which were built on mainframe architectures in the 1970s and 1980s, must now support real-time transaction processing, open banking application programming interfaces (APIs), and increasingly stringent regulatory compliance requirements including data protection mandates. Monolithic applications that once managed deposits, loans, and payments in batch cycles are fundamentally incompatible with the instant, API-driven world that both regulators and customers now expect.

The modernization of core banking systems is particularly complex because these platforms sit at the heart of every financial transaction the institution processes. A failure in a core banking system during modernization can halt payments, freeze accounts, and trigger regulatory intervention. As a result, financial institutions often begin with a legacy system modernization assessment that maps every dependency, integration, and data flow before any code is changed. Many banks are pursuing microservices adoption to decompose their monolithic applications into independently deployable services, for example by separating payment processing, account management, and fraud detection into distinct microservices that can be modernized and scaled independently. Regulatory requirements, including data protection regulations such as GDPR and industry security standards such as PCI DSS, impose strict constraints on how data is protected throughout the cloud migration process, requiring encryption, access controls, and audit trails at every stage.

The consequences of failure—whether through system outages, security breaches, or regulatory compliance violations—are severe and immediate, potentially including enforcement actions, loss of banking licenses, and irreparable reputational harm.

Manufacturing and Industry 4.0

Manufacturing and industrial enterprises are navigating the transition to Industry 4.0, where MES/ERP platforms must integrate with IoT sensors, digital twins, and advanced analytics capabilities that legacy systems were never designed to accommodate. The promise of Industry 4.0—predictive maintenance, real-time quality control, automated supply chain optimization—depends entirely on the ability to collect, process, and act on data in real time, a capability that legacy MES/ERP platforms built for batch processing and manual reporting cannot deliver without fundamental transformation.

Production planning, quality management, and supply chain visibility all depend on real-time data flows that monolithic applications and legacy MES/ERP platforms cannot deliver on their own. Delivering them requires microservices adoption and modern application programming interfaces (APIs) that expose manufacturing data to analytics platforms, partner systems, and management dashboards. Many manufacturers are adopting managed database services to replace aging on-premises databases that lack the scalability and real-time query performance required for Industry 4.0 use cases. Integrating commercial off-the-shelf (COTS) products for specific manufacturing functions (warehouse management, quality inspection, predictive maintenance) alongside custom-modernized core systems is a pragmatic approach that balances speed of deployment with long-term flexibility.

Healthcare, Government, and Telecommunications

Healthcare, government, telecommunications, and energy sectors each face their own variants of the modernization challenge, shaped by distinct regulatory environments, risk profiles, and technology landscapes. Healthcare organizations must balance patient safety and data protection with the demand for interoperable electronic records, telemedicine capabilities, and application programming interfaces (APIs) that enable data sharing between providers, insurers, and patients while maintaining strict regulatory compliance with HIPAA and equivalent mandates. Government agencies face mandates to digitize citizen-facing services while ensuring accessibility, security, and data protection, often constrained by procurement processes that favor commercial off-the-shelf (COTS) products over custom development.

In telecommunications, monolithic applications that manage network provisioning, billing, and customer service are being decomposed through microservices adoption to support the agility and scalability required for 5G service delivery, IoT device management, and converged communications platforms. Across all of these sectors, the fundamental economic logic is the same: the cost of maintaining legacy systems grows while their ability to support business objectives diminishes. A comprehensive legacy system modernization assessment is the essential first step in each case, providing the fact base that enables informed decision-making about which systems to modernize, in what order, and with what strategy.

Modernization pressure varies by industry — each vertical carries a distinct mix of regulatory, technical, and operational drivers that shapes the feasible strategy set.

Professional Services and Solution Ecosystem

The professional services ecosystem supporting modernization has matured considerably. Organizations can now access a range of offerings from legacy system modernization assessment and strategic roadmapping to managed database service platforms, microservices adoption programs, and fully managed cloud migration services. A thorough legacy system modernization assessment from an experienced provider typically covers application portfolio analysis, dependency mapping, risk evaluation, and strategy recommendation—providing the analytical foundation that financial advisors and executive teams need to make informed investment decisions. When evaluating which development partner to engage for these services, the selection framework in our guide on how to choose a software development company provides a structured approach to assessing capability, risk posture, and cultural fit.

Commercial off-the-shelf (COTS) products have expanded to cover many functions that previously required custom development, offering pre-built capabilities for CRM, HR, supply chain management, and financial reporting that can be deployed in weeks rather than months. However, the trade-off between standardization and competitive differentiation must be carefully evaluated: organizations that replace custom systems with COTS products may gain efficiency but lose the unique capabilities that differentiated them in the market. Managed database services from major cloud providers have dramatically reduced the operational burden of database administration, providing automated backup, scaling, patching, and monitoring capabilities that would require dedicated teams to replicate on-premises. Combined with microservices adoption consulting and cloud migration execution services, these managed offerings enable organizations to modernize faster and with less internal engineering investment than was previously possible.

Cross-Industry Patterns and Common Success Factors

Despite the significant differences between industries, several patterns emerge consistently across successful modernization engagements. First, every effective modernization program begins with a comprehensive legacy system modernization assessment that maps the current state—including all monolithic applications, core banking systems or equivalent mission-critical platforms, and their dependencies on application programming interfaces (APIs) both documented and undocumented. Second, regulatory compliance and data protection requirements must be addressed from the outset rather than retrofitted, regardless of whether the organization operates in financial services, healthcare, manufacturing, or government. Third, microservices adoption is increasingly the preferred target architecture across industries, but the pace and scope of adoption must be tailored to each organization’s maturity and risk tolerance.

The role of professional services in enabling modernization cannot be overstated. Organizations that attempt to execute large-scale cloud migration programs, MES/ERP platform transformations, or core banking system replacements without experienced external support frequently encounter delays and cost overruns that could have been avoided. A thorough legacy system modernization assessment, combined with access to managed database services, microservices adoption expertise, and commercial off-the-shelf (COTS) product integration capabilities, provides the foundation that enables internal teams to focus on the business-specific aspects of the transformation. Across every industry, from Industry 4.0 manufacturing to financial services to government, the organizations that invest in rigorous assessment, engage appropriate professional services, and maintain disciplined focus on regulatory compliance and data protection throughout the modernization lifecycle achieve materially better outcomes than those that attempt to manage the transformation entirely in-house.

Modernization Strategies and Approaches

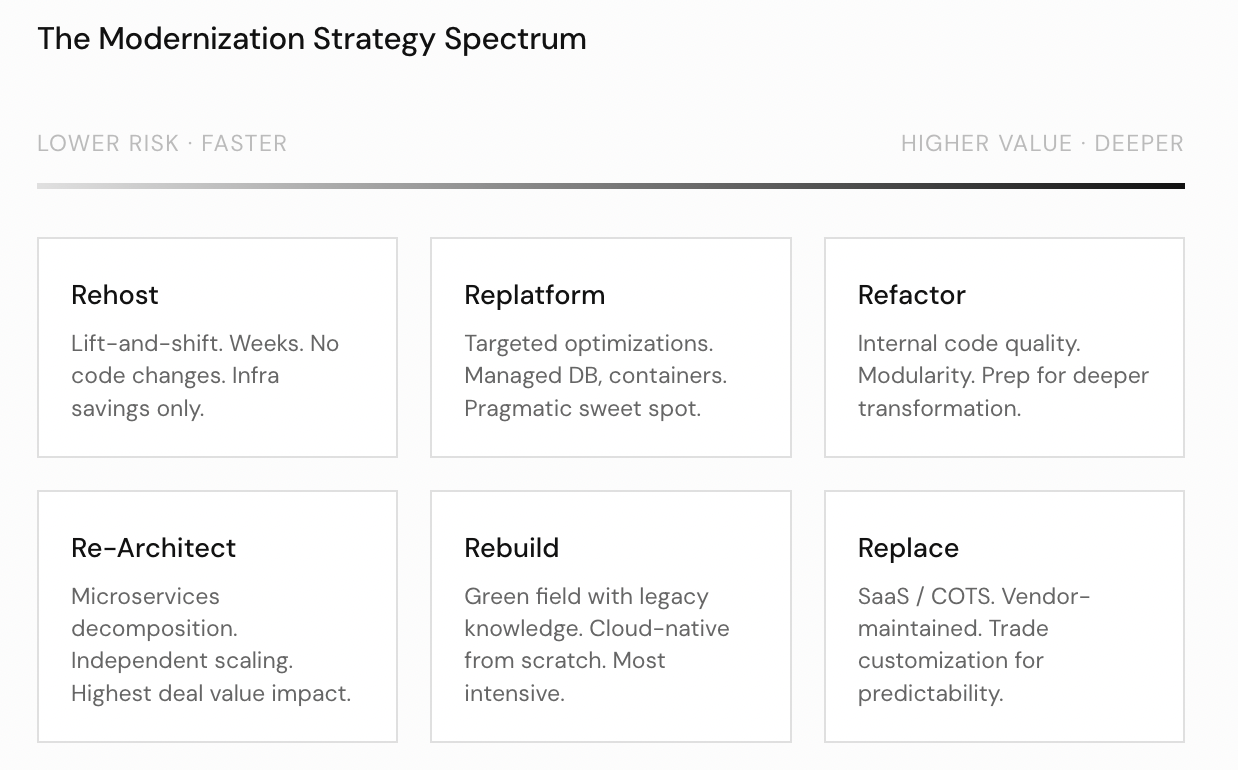

The selection of a modernization strategy is one of the highest-leverage decisions in the entire initiative, and it must be driven by a clear-eyed assessment of business objectives, risk tolerance, timeline constraints, and available capital, not by technology preferences alone. Each strategy sits on a spectrum of transformation intensity, and understanding the trade-offs between them is essential for aligning technology investment with financial outcomes. The spectrum ranges from rehosting, the lightest-touch approach, through replatforming, refactoring, re-architecting, and rebuilding to complete replacement with a software-as-a-service (SaaS) application. Hybrid patterns such as the Strangler Pattern and encapsulation techniques used in mainframe application modernization offer transitional paths that blend multiple strategies. In practice, most enterprise modernization programs employ several strategies simultaneously: rehosting commodity systems, refactoring high-value applications, re-architecting strategic platforms into microservices, and rebuilding or replacing systems beyond remediation. The key is matching each migration pathway to the specific characteristics and strategic importance of the system being modernized.

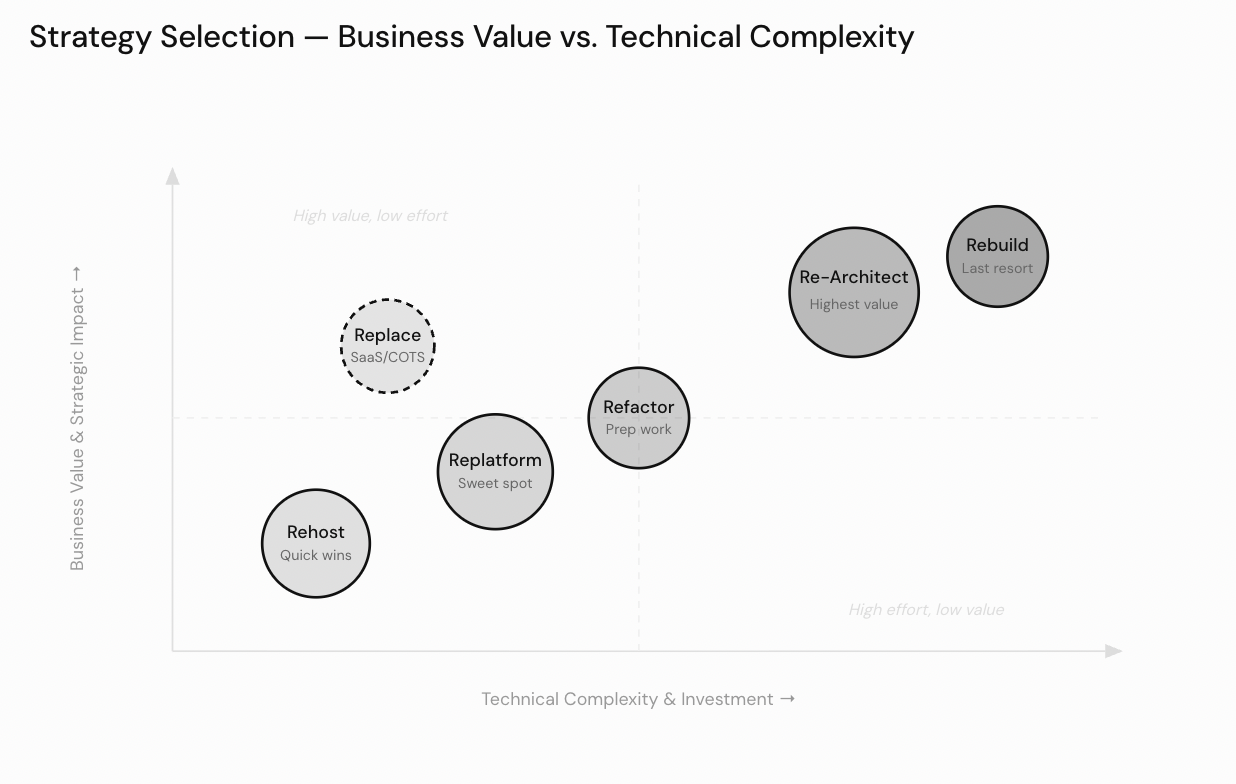

Strategy selection mapped by business value and technical complexity — Re-Architect delivers the highest deal value impact while Rehost provides the fastest path to infrastructure savings.

Rehosting: The Fastest Path to Infrastructure Value

Rehosting, sometimes called “lift and shift,” moves existing applications to modern infrastructure—typically cloud environments—with minimal or no code changes. The primary advantage of rehosting is speed: organizations can complete a rehosting migration in weeks or months rather than years, immediately capturing benefits such as reduced data center costs, improved disaster recovery posture, and access to elastic compute resources. However, rehosting does not address application-level inefficiencies. The legacy codebase, with all its accumulated complexity and technical debt, arrives in the new environment unchanged. For organizations seeking a rapid exit from expiring data center leases or aging hardware contracts, rehosting provides an essential first step—but it should be understood as the beginning of a modernization journey, not its conclusion. Rehosting is particularly effective when paired with a phased roadmap that schedules deeper transformation activities after the initial migration stabilizes.

Replatforming: Targeted Optimization During Migration

Replatforming extends the rehosting concept by making targeted optimizations during the migration process. These optimizations might include switching to managed database services, updating middleware components, adopting container orchestration platforms, or replacing legacy messaging systems with cloud-native equivalents. The defining characteristic of replatforming is that it improves the operational profile of the application without requiring a fundamental redesign of its architecture. Replatforming strikes a pragmatic balance: it delivers more value than a pure lift-and-shift migration while avoiding the extended timelines and elevated risks associated with deeper transformation approaches. For many mid-market companies, replatforming represents the sweet spot where meaningful modernization benefits can be achieved within realistic budget and timeline constraints.

Refactoring: Improving Internal Quality

Refactoring involves restructuring existing code to improve its internal quality—reducing complexity, improving modularity, eliminating redundancy, and extracting common services—while preserving the system’s external behavior. Refactoring is the discipline of making legacy code cleaner, more testable, and more maintainable without changing what it does. In practice, refactoring often targets the highest-friction areas of a codebase: tightly coupled modules that resist change, duplicated business logic spread across multiple components, and convoluted data access patterns that impede performance optimization. The financial case for refactoring rests on its ability to reduce future maintenance costs and accelerate development velocity. A well-refactored codebase enables engineering teams to deliver new features faster, respond to defects more quickly, and onboard new developers with less friction—all of which translate directly into improved operational economics.

Refactoring also serves as a preparatory step for deeper transformation: organizations frequently refactor critical modules to reduce complexity before undertaking a full re-architecting effort that decomposes the system into microservices. This staged approach—refactoring first, then re-architecting—reduces the risk of the migration by ensuring that the code being decomposed is well-understood and well-structured. Software re-engineering efforts that skip the refactoring stage often encounter unexpected complexity that could have been resolved more efficiently before the architectural transformation began.

Re-Architecting and Microservices Decomposition

Re-architecting goes substantially further than refactoring, fundamentally redesigning the application’s structure to adopt modern architectural patterns. The most common target architecture in re-architecting initiatives is microservices—an approach that decomposes a monolithic application into a collection of small, independently deployable services, each responsible for a specific business capability. A microservices architecture enables organizations to scale individual components independently, deploy updates to one service without affecting others, and assign dedicated teams to distinct business domains. The benefits of microservices for deal economics are significant: acquirers evaluating a microservices-based platform see reduced integration risk, clearer boundaries for potential carve-outs, and a technology foundation that supports rapid growth without proportional increases in engineering headcount. For a detailed view of how engineering team costs scale across these engagement models, our breakdown of custom software development pricing provides a useful reference for financial modeling.

However, re-architecting into microservices is not without cost or complexity. It requires careful domain modeling, robust service communication patterns, distributed data management strategies, and sophisticated monitoring and observability tooling. Software re-engineering at this level demands a deep understanding of both the legacy system’s embedded business logic and the target architecture’s operational characteristics. Organizations that underestimate the complexity of re-architecting often find themselves managing a “distributed monolith”—a system that combines the operational overhead of microservices with none of their architectural benefits. Successful re-architecting requires sustained investment in engineering talent, tooling, and organizational alignment over multiple quarters.

The modernization strategy spectrum from lower risk/faster (Rehost) to higher value/deeper (Re-Architect and Rebuild) — most enterprise programs apply multiple strategies simultaneously.

Rebuilding: Starting from a Clean Foundation

Rebuilding means creating entirely new applications from scratch, using modern technologies, languages, and architectural patterns, while preserving the business requirements and domain knowledge embedded in the legacy system. This is the most resource-intensive approach but may be the only viable option when legacy codebases have deteriorated beyond the point where refactoring or re-architecting is feasible, that is, when the technical debt is so severe that re-engineering the existing system would cost more than starting fresh. Rebuilding offers the opportunity to design systems that are optimized for current and future requirements rather than constrained by historical decisions, and rebuilt applications can be designed from the outset around microservices, serverless computing, and cloud-native patterns.

The key challenge in any rebuilding effort is ensuring that the deep institutional knowledge encoded in the legacy system, often in the form of edge cases, exception handling, and domain-specific business rules, is fully captured and faithfully reproduced in the new implementation. Organizations that treat rebuilding as a straightforward “greenfield” development project, ignoring the complexity of the system they are replacing, frequently deliver solutions that fail to meet operational requirements. The Strangler Pattern can mitigate this risk by allowing the rebuilt components to be deployed alongside the legacy system and validated in production before the original is decommissioned. Where a complete rebuild is too ambitious, organizations may choose to rebuild only the highest-value modules while pursuing replatforming or rehosting for less critical components, a blended strategy that makes better use of limited migration resources.
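One way to check that a rebuilt component reproduces legacy behavior is to run both implementations on the same inputs and report every disagreement. The sketch below is illustrative only: `legacy_late_fee` and `rebuilt_late_fee` are hypothetical stand-ins for a legacy rule and its replacement, and the fee rule itself (a 3-day grace period, a 2 percent fee capped at 50) is invented for the example.

```python
from typing import Callable, Iterable, List, Tuple

FeeRule = Callable[[int, float], float]


def legacy_late_fee(days_late: int, balance: float) -> float:
    """Hypothetical legacy rule with an edge case: no fee within a 3-day grace period."""
    if days_late <= 3:
        return 0.0
    return round(min(balance * 0.02, 50.0), 2)


def rebuilt_late_fee(days_late: int, balance: float) -> float:
    """Hypothetical rebuilt rule that missed the grace-period edge case."""
    if days_late <= 0:
        return 0.0
    return round(min(balance * 0.02, 50.0), 2)


def find_mismatches(
    legacy: FeeRule, rebuilt: FeeRule, cases: Iterable[Tuple[int, float]]
) -> List[Tuple[Tuple[int, float], float, float]]:
    """Run both implementations on each case and collect (input, legacy, rebuilt) where they differ."""
    mismatches = []
    for args in cases:
        expected = legacy(*args)
        actual = rebuilt(*args)
        if expected != actual:
            mismatches.append((args, expected, actual))
    return mismatches


# Illustrative inputs; in practice these might be replayed from production traffic.
cases = [(0, 1000.0), (2, 1000.0), (10, 1000.0), (10, 5000.0)]
mismatches = find_mismatches(legacy_late_fee, rebuilt_late_fee, cases)
```

With these inputs, only the case inside the grace period disagrees, which is the kind of undocumented edge case a rebuild tends to lose. The same comparison can be run against production traffic while both systems operate side by side.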

Replacing with SaaS and Commercial Platforms

Replacing involves retiring legacy systems entirely in favor of a software-as-a-service (SaaS) application or other commercial platform that provides equivalent functionality. The appeal of replacing with a SaaS application is clear: organizations gain access to continuously updated, vendor-maintained software without the burden of custom development and ongoing maintenance. SaaS applications also benefit from economies of scale, as vendors spread development costs across their entire customer base. However, the replace strategy introduces dependency on external vendors and may require the organization to adapt its processes to conform to the platform’s design assumptions rather than the reverse. From a financial advisory perspective, replacing legacy systems with SaaS applications can dramatically improve cost predictability through subscription-based pricing models, but organizations must carefully evaluate total long-term costs, including subscription fees, customization expenses, data migration costs, and potential switching costs if the vendor relationship deteriorates.
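The total-cost evaluation described above can be sketched as simple arithmetic. Every figure below is made up for illustration, and the cost categories are just the ones named in the text (subscription fees, customization, data migration, and a reserve for potential switching costs); a real model would add discounting, price escalation, and internal staffing.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SaasCosts:
    annual_subscription: float
    customization: float      # one-time
    data_migration: float     # one-time
    switching_reserve: float  # one-time allowance for leaving the vendor later

    @property
    def one_time(self) -> float:
        return self.customization + self.data_migration + self.switching_reserve


def saas_total_cost(costs: SaasCosts, years: int) -> float:
    """Undiscounted cost of the SaaS option over the planning horizon."""
    return costs.one_time + costs.annual_subscription * years


def break_even_years(costs: SaasCosts, legacy_annual_maintenance: float) -> Optional[float]:
    """Years until annual savings repay the one-time costs, or None if there are no savings."""
    annual_saving = legacy_annual_maintenance - costs.annual_subscription
    if annual_saving <= 0:
        return None
    return costs.one_time / annual_saving


# Hypothetical inputs, not benchmarks.
costs = SaasCosts(
    annual_subscription=400_000,
    customization=250_000,
    data_migration=300_000,
    switching_reserve=150_000,
)
five_year_saas = saas_total_cost(costs, years=5)  # 700,000 one-time + 2,000,000 subscription
five_year_legacy = 600_000 * 5                    # keep paying legacy maintenance instead
payback = break_even_years(costs, legacy_annual_maintenance=600_000)
```

In this invented scenario the SaaS option costs 2.7 million over five years against 3.0 million for continued maintenance, and the one-time costs are repaid after 3.5 years. A lower maintenance bill or a higher subscription can easily reverse the result.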

The Strangler Pattern and Encapsulation

Two hybrid approaches deserve particular attention for organizations operating in environments where risk tolerance is low and continuity is paramount. The Strangler Pattern, named after the strangler fig that gradually envelops its host tree, involves progressively replacing legacy functionality with modern implementations and routing traffic between old and new systems until the legacy system can be fully decommissioned. It enables organizations to deliver modernization value incrementally, validating each new component in production before proceeding to the next. This substantially reduces the risk of a failed “big bang” cutover and allows the business to realize benefits continuously rather than waiting for a single, high-stakes launch event. The pattern is compatible with virtually every other modernization strategy: individual components can be rebuilt, re-architected into microservices, replaced with a SaaS application, or refactored, all within the same overarching framework. This flexibility makes it particularly valuable for complex migration programs where different parts of the system warrant different transformation approaches.
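The routing idea at the heart of the Strangler Pattern can be sketched as a small facade that sends each request to either the legacy system or its modern replacement. This is a minimal illustration rather than a production design: the `StranglerRouter` class, the handler functions, and the path prefixes are all hypothetical, and real programs usually implement this routing in an API gateway or reverse proxy.

```python
from typing import Callable, Set

Handler = Callable[[str], str]


def legacy_handler(path: str) -> str:
    """Stand-in for a call into the legacy system."""
    return f"legacy:{path}"


def modern_handler(path: str) -> str:
    """Stand-in for a call into the modern replacement."""
    return f"modern:{path}"


class StranglerRouter:
    """Sends a request to the modern handler once its path prefix has been migrated."""

    def __init__(self, legacy: Handler, modern: Handler) -> None:
        self._legacy = legacy
        self._modern = modern
        self._migrated: Set[str] = set()

    def migrate(self, prefix: str) -> None:
        """Start routing this slice of functionality to the modern system."""
        self._migrated.add(prefix)

    def rollback(self, prefix: str) -> None:
        """Send this slice back to the legacy system if validation fails."""
        self._migrated.discard(prefix)

    def handle(self, path: str) -> str:
        if any(path.startswith(prefix) for prefix in self._migrated):
            return self._modern(path)
        return self._legacy(path)


router = StranglerRouter(legacy_handler, modern_handler)
before = router.handle("/billing/invoices")   # still served by the legacy system
router.migrate("/billing")
after = router.handle("/billing/invoices")    # now served by the modern system
untouched = router.handle("/orders/42")       # not migrated yet, stays on legacy
```

Because each prefix is switched individually and can be rolled back, every migration step is small and reversible. The legacy system can be decommissioned once no traffic is routed to it.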

Encapsulation wraps legacy systems behind modern API layers, preserving their internal functionality while making them accessible to modern consumers through standardized interfaces. Mainframe application modernization frequently employs encapsulation as an interim measure, exposing mainframe transaction processing capabilities through RESTful APIs or event-driven interfaces while longer-term migration, rebuilding, or re-engineering strategies are developed. This approach extends the useful life of legacy mainframe systems while enabling modern front-end applications, custom web applications, mobile platforms, and partner integrations to interact with mainframe-hosted business logic. For financial institutions and government agencies with deeply embedded mainframe dependencies, encapsulation provides a practical bridge between current operations and future-state architecture, buying time for more comprehensive re-architecting or rebuilding without disrupting ongoing operations.
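As a rough illustration of encapsulation, the sketch below wraps a legacy-style fixed-width record behind a function that returns JSON, the kind of payload a REST endpoint might serve. The record layout, the `legacy_account_lookup` stand-in, and the field names are assumptions made for the example; a real adapter would call the mainframe through whatever connector the organization already uses.

```python
import json


def legacy_account_lookup(account_id: str) -> str:
    """Stand-in for a mainframe transaction that returns a fixed-width record.

    Hypothetical layout: account id (10 chars, zero-padded), holder name
    (20 chars, space-padded), balance in cents (12 digits, zero-padded).
    """
    return f"{account_id:0>10}{'JANE DOE':<20}{150075:012d}"


def get_account(account_id: str) -> str:
    """Modern-facing wrapper: calls the legacy routine and returns a JSON document."""
    record = legacy_account_lookup(account_id)
    payload = {
        "accountId": record[0:10],
        "holderName": record[10:30].rstrip(),
        "balanceCents": int(record[30:42]),
    }
    return json.dumps(payload)


response = json.loads(get_account("12345"))
```

The legacy routine is untouched; only the wrapper knows about column offsets and padding. Consumers see named fields, so the backing implementation can later be replaced without changing the interface.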

Serverless Computing and Modern Deployment Models

Serverless computing has emerged as a compelling target architecture for certain workloads, shifting most infrastructure management to the cloud provider and aligning costs closely with actual usage. In a serverless model, the cloud provider dynamically allocates compute resources in response to requests or events and charges for the execution time actually consumed. This is an attractive proposition for workloads with variable demand profiles, such as event-driven processing, scheduled batch jobs, and API backends with unpredictable traffic. Serverless computing also reduces the operational burden on engineering teams, freeing them to focus on business logic rather than infrastructure provisioning, patching, and scaling. When combined with microservices and modern API gateways, serverless architectures can deliver highly scalable, cost-efficient platforms that align naturally with the consumption-based economics that investors and acquirers increasingly favor.
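The usage-based economics can be made concrete with a small calculation comparing a pay-per-use bill against a fixed monthly infrastructure cost. The prices and workload figures below are placeholders chosen for easy arithmetic, not any provider's actual rates, and the model ignores memory tiers, free allowances, and data transfer.

```python
def serverless_monthly_cost(
    requests: int,
    avg_seconds: float,
    price_per_second: float,
    price_per_request: float,
) -> float:
    """Monthly bill when every request is charged for its duration plus a flat fee."""
    return requests * (avg_seconds * price_per_second + price_per_request)


def break_even_requests(
    fixed_monthly_cost: float,
    avg_seconds: float,
    price_per_second: float,
    price_per_request: float,
) -> float:
    """Monthly request volume at which serverless costs as much as fixed infrastructure."""
    cost_per_request = avg_seconds * price_per_second + price_per_request
    return fixed_monthly_cost / cost_per_request


# Placeholder inputs: 0.2 s per request, hypothetical unit prices.
AVG_SECONDS = 0.2
PRICE_PER_SECOND = 0.00002
PRICE_PER_REQUEST = 0.000001

quiet_month = serverless_monthly_cost(
    1_000_000, AVG_SECONDS, PRICE_PER_SECOND, PRICE_PER_REQUEST
)
threshold = break_even_requests(
    500.0, AVG_SECONDS, PRICE_PER_SECOND, PRICE_PER_REQUEST
)
```

With these placeholder numbers, a month with one million requests costs about 5, while a fixed 500-per-month server only becomes cheaper above roughly 100 million requests per month. Spiky, low-volume workloads therefore favor serverless, while sustained high volume may not.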

FAQ

What is legacy software modernization?

Legacy software modernization is the process of transforming outdated software systems—built on aging architectures, programming languages, or infrastructure—into modern, maintainable platforms that support current business requirements. Modernization strategies range from rehosting (lift and shift) and replatforming to refactoring, re-architecting into microservices, rebuilding from scratch, or replacing with SaaS alternatives. The goal is to reduce technical debt, lower maintenance costs, improve security posture, and enable faster innovation.

How much does legacy software modernization cost?

The cost varies significantly based on system complexity, chosen strategy, and organizational scale. A rehosting migration may cost hundreds of thousands of dollars, while a full re-architecting or rebuilding effort for a mission-critical enterprise system can run into tens of millions. A credible cost model must include assessment, design, migration execution, testing, training, data migration, and ongoing maintenance—as well as contingency reserves, which typically add 20 to 30 percent to initial estimates.
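A minimal sketch of such a cost model: sum the line items, then apply the 20 to 30 percent contingency range. The line-item amounts are invented so that they sum to a round figure, and the `estimate_with_contingency` helper is hypothetical.

```python
from typing import Dict, Tuple


def estimate_with_contingency(
    line_items: Dict[str, int], low_pct: int = 20, high_pct: int = 30
) -> Tuple[int, int, int]:
    """Return (base estimate, base plus low contingency, base plus high contingency)."""
    base = sum(line_items.values())
    low = base * (100 + low_pct) // 100
    high = base * (100 + high_pct) // 100
    return base, low, high


# Invented amounts in dollars, one entry per cost category named above.
line_items = {
    "assessment": 80_000,
    "design": 120_000,
    "migration_execution": 450_000,
    "testing": 130_000,
    "training": 40_000,
    "data_migration": 100_000,
    "ongoing_maintenance": 80_000,
}
base, low, high = estimate_with_contingency(line_items)
```

Here a 1,000,000 base estimate becomes a budget range of 1,200,000 to 1,300,000 once contingency is included. Presenting the range rather than a single number makes the uncertainty visible to whoever approves the budget.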

What are the biggest risks of legacy software modernization?

The most significant risks include technical debt and undocumented dependencies, data loss during migration from fragmented legacy data stores, compatibility issues between modernized and remaining legacy components, system downtime during cutover events, security vulnerabilities introduced by new APIs and cloud surfaces, compliance updates that shift requirements mid-project, skill gaps on the modernization team, and obsolete hardware dependencies. These risks are interconnected—addressing one in isolation can inadvertently worsen another.

What is the Strangler Pattern in software modernization?

The Strangler Pattern is a hybrid modernization approach in which legacy functionality is progressively replaced with modern implementations, routing traffic between old and new systems until the legacy platform can be fully decommissioned. Named after the strangler fig that gradually envelops its host tree, this pattern allows organizations to deliver modernization value incrementally, validating each new component in production before proceeding. It dramatically reduces the risk of a failed big-bang cutover and is compatible with microservices decomposition, rebuilding, and SaaS replacement strategies.

How long does legacy software modernization take?

Timelines depend heavily on the chosen strategy and system complexity. A rehosting migration can be completed in weeks to a few months. Replatforming typically takes three to twelve months. Re-architecting into microservices or rebuilding mission-critical systems commonly spans one to three years or more. Most large enterprise programs use an incremental approach, delivering value in phases of three to six months. Timelines are frequently extended by undiscovered technical debt, data migration complexity, and skill gaps.

What is the difference between rehosting and re-architecting?

Rehosting (lift and shift) moves an existing application to modern infrastructure—typically cloud—with minimal or no code changes. It is the fastest strategy but does not address application-level technical debt. Re-architecting fundamentally redesigns the application’s structure, most commonly by decomposing a monolith into microservices. Re-architecting delivers far greater long-term benefits—independent scalability, faster deployment cycles, reduced integration risk—but requires significantly more investment, time, and engineering capability.

How does legacy software affect enterprise valuation?

Legacy software acts as a hidden discount on enterprise value. Sophisticated acquirers conduct technology due diligence that explicitly models the refactoring, re-architecting, or rebuilding costs embedded in a target’s legacy systems and deduct these estimates from their valuation. Systems with severe technical debt, undocumented APIs, obsolete hardware, or security vulnerabilities represent material liabilities. Conversely, sellers who proactively modernize before going to market—replacing legacy architectures with clean, cloud-native platforms—often command meaningful valuation premiums.

Conclusion

Legacy software modernization is not a technology project that happens to have financial consequences—it is a financial decision that happens to involve technology. The distinction matters because it changes who owns the problem, how success is defined, and how risk is managed. Organizations that treat modernization as a purely technical exercise frequently underinvest in assessment, underestimate complexity, and produce outcomes that disappoint both engineering teams and business stakeholders. Organizations that approach it as a capital allocation decision—with the same rigor applied to any major investment—consistently achieve better returns, fewer surprises, and more durable platforms.

For financial advisors, the imperative is clear: legacy technology must be on the agenda of every due diligence process, every value creation plan, and every strategic review. The technical debt embedded in aging systems does not remain static—it compounds. Every quarter of deferred modernization narrows the window for cost-effective intervention and increases the probability of a forced, reactive migration driven by vendor end-of-life, security incident, or regulatory action. Proactive modernization, executed with financial discipline and strategic clarity, converts a liability into an asset: a modern platform that enables faster growth, commands higher market multiples, and reduces the integration friction that destroys value in acquisitions.

The strategies, frameworks, and risk considerations covered in this guide provide a foundation for informed decision-making—but every modernization journey is specific to the organization undertaking it. The right strategy for a financial services firm managing core banking systems is not the right strategy for a manufacturer modernizing MES/ERP platforms or a healthcare organization rationalizing its clinical data infrastructure. What is universal is the need for rigorous assessment, disciplined execution, and sustained executive commitment. Organizations that bring these three elements to their modernization programs—regardless of industry, system complexity, or chosen strategy—are the ones that emerge with technology platforms capable of supporting the next decade of growth. To explore how a development partner can support your modernization program, see our overview of what a software development company does and our guide to how to choose the right one, or explore our enterprise software development services.